Navigating the Risks of AI Skill Ecosystems in Development

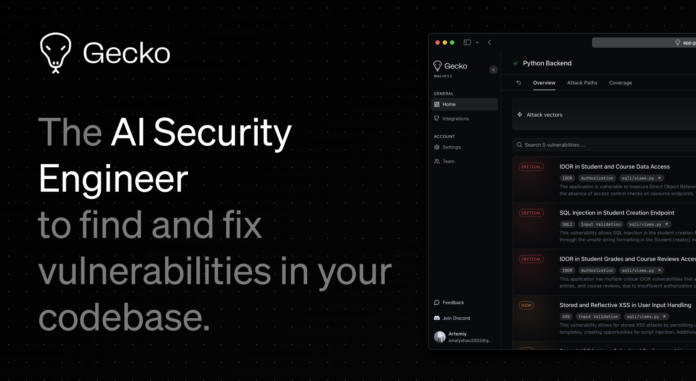

The integration of AI skills into developer tools is rapidly changing how developers work. However, this convenience comes with significant security risks. Here’s what you need to know:

- Common AI Skills: Tools like Claude Code, Cursor, and Codex CLI rely on SKILL.md files to load skills. Skills are easy to add from marketplaces like ClawHub, but doing so can introduce vulnerabilities.

- Security Findings:

  - 13.4% of skills on ClawHub have critical security issues (Snyk’s ToxicSkills).

  - Conventional scanners miss threats embedded in test files because they focus solely on SKILL.md content.

- The Attack Chain:

  - Attackers can publish seemingly innocuous skills that bundle malicious test files. Once the skill is added to a developer’s repo, the project’s test runner can pick up those files and execute the malicious code.
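To make the scanner gap concrete, here is a minimal sketch of a check that looks beyond SKILL.md and flags files a test runner would likely pick up. The helper names (`isLikelyTestFile`, `findTestFiles`) are hypothetical, and the patterns only approximate the default test-file globs used by Jest and Vitest; treat them as an assumption to adapt, not as either tool's exact matching logic.

```typescript
// Hypothetical helpers: flag files in a skill bundle that common test
// runners would likely discover. The patterns approximate Jest/Vitest
// default globs and are an assumption, not an exact copy of their logic.
const TEST_FILE_PATTERNS: RegExp[] = [
  // Any JS/TS file under a __tests__ directory
  /(^|\/)__tests__\/.+\.[cm]?[jt]sx?$/,
  // Files named like foo.test.ts or bar.spec.js
  /\.(test|spec)\.[cm]?[jt]sx?$/,
];

function isLikelyTestFile(path: string): boolean {
  // Normalize Windows separators so the patterns only deal with "/"
  const normalized = path.replace(/\\/g, "/");
  return TEST_FILE_PATTERNS.some((pattern) => pattern.test(normalized));
}

function findTestFiles(paths: string[]): string[] {
  // Return only the paths that look like runnable test files
  return paths.filter(isLikelyTestFile);
}
```

Given the file listing of a skill, `findTestFiles` returns the subset worth reviewing by hand before the skill is added to a repo. A skill that ships only SKILL.md and plain scripts returns an empty list.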

Mitigations:

- Immediate Actions: Implement test runner exclusions for `.agents/` directories in Jest and Vitest configurations.

- Registry Awareness: Encourage ClawHub to flag skills containing test files.
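The test runner exclusions could look roughly like the following. This is a sketch that assumes skills are installed under a `.agents/` directory at or below the project root and that the project uses TypeScript config files; adjust the patterns if your layout differs.

```typescript
// vitest.config.ts
import { configDefaults, defineConfig } from "vitest/config";

export default defineConfig({
  test: {
    // Keep Vitest's default exclusions and add the agent skill directory
    exclude: [...configDefaults.exclude, "**/.agents/**"],
  },
});
```

```typescript
// jest.config.ts
import type { Config } from "jest";

const config: Config = {
  // These are regex strings matched against the full file path.
  // Setting this option replaces the default, so node_modules is listed again.
  testPathIgnorePatterns: ["/node_modules/", "/\\.agents/"],
};

export default config;
```

With either setting in place, test files shipped inside a skill are no longer collected when the test suite runs. This limits exposure to this particular attack chain but does not replace reviewing a skill's contents before adding it.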

Understanding these risks empowers developers to use AI safely.

🔗 Share your insights and experience in the comments! Let’s thrive in AI securely!