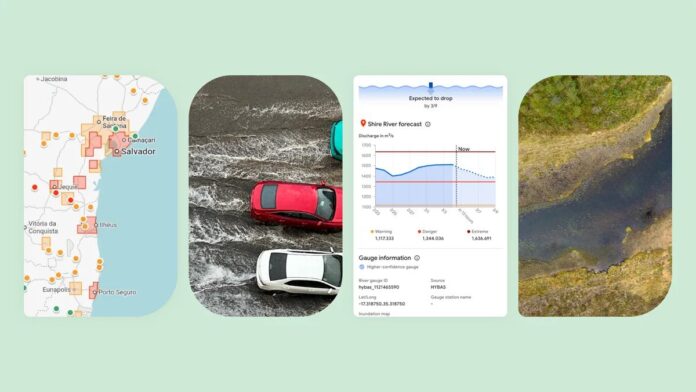

Google researchers used the Gemini large language model to analyze 5 million news articles and build a geo-tagged dataset of 2.6 million flood events. The dataset, named Groundsource, addresses gaps in flash flood forecasting, particularly in areas with limited meteorological infrastructure. A Long Short-Term Memory (LSTM) neural network trained on the dataset generates flash flood probabilities for urban areas across 150 countries. The forecasts are published through the Flood Hub platform, which also supports data sharing with emergency response agencies.
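To make the idea of a geo-tagged event dataset concrete, here is a minimal sketch of how extracted flood reports could be grouped into per-cell, per-day labels of the kind a sequence model might train on. It is not Google's pipeline: the `FloodReport` schema, the `cell_of` and `label_cells` helpers, the 0.05-degree cell size, and the sample points are all assumptions made up for illustration.

```python
import math
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class FloodReport:
    """One flood event extracted from a news article (hypothetical schema)."""
    lat: float
    lon: float
    day: date


def cell_of(lat: float, lon: float, cell_deg: float = 0.05) -> tuple[int, int]:
    """Map a coordinate to an integer grid-cell index.

    The cell size in degrees is an assumed value, not one from the article.
    """
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def label_cells(reports, cell_deg: float = 0.05) -> dict:
    """Count reports per (cell, day); any count above zero marks a positive label."""
    counts = defaultdict(int)
    for r in reports:
        counts[(cell_of(r.lat, r.lon, cell_deg), r.day)] += 1
    return dict(counts)


# Made-up sample reports: two nearby reports on the same day, one elsewhere.
reports = [
    FloodReport(12.97, 77.59, date(2023, 9, 5)),
    FloodReport(12.98, 77.58, date(2023, 9, 5)),
    FloodReport(-1.29, 36.82, date(2024, 4, 24)),
]
labels = label_cells(reports)
for (cell, day), n in sorted(labels.items()):
    print(cell, day.isoformat(), n)
```

Counting reports per cell rather than storing a bare flag keeps some signal about how widely an event was covered, which a later step could use to filter out one-off or doubtful mentions.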

Gila Loike, a Google Research product manager, highlighted the novel use of language models for this purpose. The model has limitations: it operates at low resolution, forecasting over 20-square-kilometer areas, and lacks the precision of established systems such as those of the U.S. National Weather Service. Juliet Rothenberg of Google’s Resilience team emphasized that aggregating large numbers of reports can help fill data gaps. The team aims to extend the approach to build datasets for other environmental phenomena, addressing the challenge of data scarcity in geophysics.
