Unlocking the Power of Chunking in AI: Why It Matters

In the realm of AI and large language models (LLMs), chunking (splitting long documents into smaller passages before they are indexed and retrieved) is a crucial technique. Here’s why chunking matters:

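- The Role of RAG: Retrieval-Augmented Generation (RAG) improves LLM accuracy by retrieving relevant passages from an external knowledge source and adding them to the prompt. Chunking determines what those retrievable passages are.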

- The Importance of Context: Well-designed chunks give the LLM concise, self-contained inputs, which improves response quality.

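- Avoiding Information Overload: Splitting large documents into manageable chunks keeps retrieved text within the model's context window and lets the retriever return only the passages relevant to a query, reducing the risk that key details are truncated or drowned out.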
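The pipeline these points describe can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any particular RAG framework: chunks are fixed-size word windows with overlap, and a toy keyword-overlap score stands in for the embedding similarity a real retriever would use. The names `fixed_size_chunks`, `score`, `retrieve`, and `build_prompt`, and the default sizes, are hypothetical.

```python
import re
from collections import Counter


def fixed_size_chunks(text: str, size: int = 200, overlap: int = 20) -> list[str]:
    """Split text into windows of `size` words; consecutive windows share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


def _tokens(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, chunk: str) -> int:
    """Toy relevance score (assumption): occurrences of query terms in the chunk,
    standing in for embedding similarity."""
    counts = Counter(_tokens(chunk))
    return sum(counts[t] for t in set(_tokens(query)))


def retrieve(query: str, chunks: list[str], k: int = 3) -> list[str]:
    """Return the k highest-scoring chunks."""
    return sorted(chunks, key=lambda c: score(query, c), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str], k: int = 3) -> str:
    """Assemble a RAG-style prompt from the retrieved chunks only."""
    context = "\n---\n".join(retrieve(query, chunks, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"
```

The overlap is a common hedge against a fact being cut in half at a window edge; the price is some duplicated text in the index.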

Innovations in Chunking:

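- Semantic Chunking: Rather than cutting text at a fixed length, this approach places chunk boundaries where the topic shifts (for example, where the similarity between adjacent sentences drops), so each chunk keeps its essential information with minimal interruptions to context.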

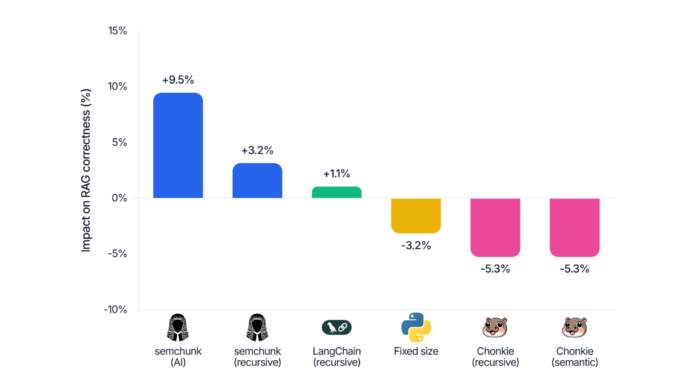

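- semchunk Algorithm: Focuses on preserving syntactic structure by recursively splitting text at the most meaningful boundary available (runs of newlines first, then tabs, then sentence endings, and so on down to individual words) until every chunk fits the size limit. The resulting hierarchy of sections, sentences, and clauses can feed hierarchical, knowledge-graph-style representations for richer contextual understanding.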
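To make the semantic-chunking idea concrete, here is a minimal sketch, not a reference implementation: it splits text into sentences and starts a new chunk whenever the cosine similarity between adjacent sentences falls below a threshold. The bag-of-words `embed` function is a placeholder assumption (a real system would call a sentence-embedding model), the naive regex sentence splitter is likewise a simplification, and the threshold would need tuning per corpus.

```python
import math
import re
from collections import Counter


def split_sentences(text: str) -> list[str]:
    # Naive splitter: break after ".", "!" or "?" followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]


def embed(sentence: str) -> Counter:
    # Placeholder embedding (assumption): bag-of-words term counts.
    return Counter(re.findall(r"[a-z0-9]+", sentence.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def semantic_chunks(text: str, threshold: float = 0.1) -> list[str]:
    """Group consecutive sentences; open a new chunk when adjacent similarity drops."""
    sentences = split_sentences(text)
    if not sentences:
        return []
    chunks = [[sentences[0]]]
    prev = embed(sentences[0])
    for sentence in sentences[1:]:
        vec = embed(sentence)
        if cosine(prev, vec) < threshold:
            chunks.append([sentence])  # meaning shifted: start a new chunk
        else:
            chunks[-1].append(sentence)
        prev = vec
    return [" ".join(chunk) for chunk in chunks]
```

On a short lease excerpt that moves from rent to roof repairs, `semantic_chunks(text, threshold=0.1)` returns one chunk per topic, whereas a fixed-length splitter could cut through either topic.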

Why Should You Care?

The choice of chunking algorithm can substantially affect the correctness of AI responses, especially in the legal domain, where precise access to the exact wording of statutes, contracts, and case law is vital.
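Why the choice matters is easy to see on a single clause. The sketch below, written for illustration and not the semchunk library's actual code, compares naive fixed-width splitting with a recursive, boundary-aware splitter in the spirit of semchunk. The separator hierarchy in `SEPARATORS`, the function names, and the use of character counts instead of token counts are all assumptions.

```python
# Hypothetical separator hierarchy, from most to least meaningful boundary.
SEPARATORS = ["\n\n", "\n", ". ", "; ", ", ", " "]


def fixed_width_chunks(text: str, width: int = 80) -> list[str]:
    """Naive baseline: cut every `width` characters, ignoring structure."""
    return [text[i:i + width] for i in range(0, len(text), width)]


def _split(text: str, max_chars: int, separators: list[str]) -> list[str]:
    if len(text) <= max_chars:
        return [text]
    for i, sep in enumerate(separators):
        if sep in text:
            parts = text.split(sep)
            # Re-attach the separator to each piece so no characters are lost.
            pieces = [p + sep for p in parts[:-1]] + [parts[-1]]
            # Pieces that are still too long are split again with finer separators.
            units = []
            for piece in pieces:
                units.extend(_split(piece, max_chars, separators[i + 1:]))
            # Greedily merge adjacent units back together up to max_chars.
            chunks, current = [], ""
            for unit in units:
                if current and len(current) + len(unit) > max_chars:
                    chunks.append(current)
                    current = unit
                else:
                    current += unit
            if current:
                chunks.append(current)
            return chunks
    # No separator left: fall back to hard character cuts.
    return fixed_width_chunks(text, max_chars)


def boundary_aware_chunks(text: str, max_chars: int = 80) -> list[str]:
    """Recursive, semchunk-inspired splitter; trims whitespace around each chunk."""
    return [c.strip() for c in _split(text, max_chars, SEPARATORS) if c.strip()]


clause = ("The tenant may terminate this lease early. "
          "However, the tenant may not sublet the premises without written consent.")
naive = fixed_width_chunks(clause, 80)
aware = boundary_aware_chunks(clause, 80)
```

With an 80-character limit, the fixed-width version cuts the second sentence inside "sublet the premises", so a retriever could return the prohibition without its object, while the boundary-aware version keeps each sentence whole. For real workloads, the semchunk package on PyPI implements the actual algorithm and measures chunk size in tokens rather than characters.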

Join the conversation! Share your thoughts on chunking and its implications in AI below!