🚀 Unveiling SysMoBench: Revolutionizing AI Modeling of Complex Systems

SysMoBench is a benchmark for assessing how well generative AI can model complex concurrent and distributed systems. The paper dates back to January 2026, yet the AI landscape moves so quickly that models like Claude-Sonnet-4 and GPT-5 already look somewhat outdated, especially with the arrival of Claude 3.5 Opus and OpenAI’s Codex.

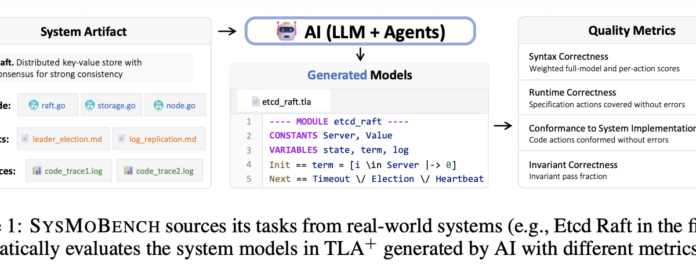

Key Highlights:

- Core Distinctions: The paper distinguishes modeling algorithms from modeling systems, emphasizing that an accurate system model is critical for verifying system code through rigorous testing.

- AI Challenges: It finds that generative models frequently introduce syntax errors and handle temporal reasoning poorly, exposing their weaknesses when modeling complex systems.

- Innovative Metrics: SysMoBench employs rigorous evaluations, including:

  - Syntax correctness

  - Runtime correctness

  - Invariant correctness

  - Conformance measurement
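To make the last two metrics concrete, here is a minimal Python sketch of what invariant checking and conformance checking can look like on a toy model. It is an illustration only, not SysMoBench's actual tooling: the two-process lock model and the names `next_states`, `mutual_exclusion`, `check_invariant`, and `conforms` are all hypothetical.

```python
from collections import deque

# Hypothetical toy model (not from the paper): two processes share one lock.
# A state is (pcs, holder): pcs[i] is "idle" or "critical" for process i,
# and holder is None or the id of the process holding the lock.
INIT = (("idle", "idle"), None)


def next_states(state):
    """Yield every state reachable from `state` in one step."""
    pcs, holder = state
    for p in range(len(pcs)):
        if pcs[p] == "idle" and holder is None:
            # Process p acquires the lock and enters its critical section.
            new_pcs = list(pcs)
            new_pcs[p] = "critical"
            yield (tuple(new_pcs), p)
        elif pcs[p] == "critical" and holder == p:
            # Process p leaves its critical section and releases the lock.
            new_pcs = list(pcs)
            new_pcs[p] = "idle"
            yield (tuple(new_pcs), None)


def mutual_exclusion(state):
    """Invariant: at most one process is in its critical section."""
    pcs, _ = state
    return sum(pc == "critical" for pc in pcs) <= 1


def check_invariant(init, step, invariant):
    """Breadth-first search over all reachable states.

    Returns (True, None) if the invariant holds everywhere,
    otherwise (False, first_violating_state_found).
    """
    seen = {init}
    queue = deque([init])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            return False, state
        for nxt in step(state):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return True, None


def conforms(trace, init, step):
    """Check that an observed trace is a behavior the model allows:
    it starts in the initial state and every transition is a model step."""
    if not trace or trace[0] != init:
        return False
    return all(b in set(step(a)) for a, b in zip(trace, trace[1:]))
```

In this sketch, invariant correctness corresponds to `check_invariant` finding no violating state, and conformance corresponds to `conforms` accepting traces recorded from a real implementation. A trace in which both processes are in the critical section at once would be rejected, because the model has no step that produces it.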

Despite its strengths, the paper also emphasizes the need for clearer community engagement to enhance SysMoBench’s value.

💡 Join the discussion! What do you think about AI’s potential in system modeling? Share your thoughts below!