Navigating the Future of AI Security: Claude Auto Mode vs. Grith

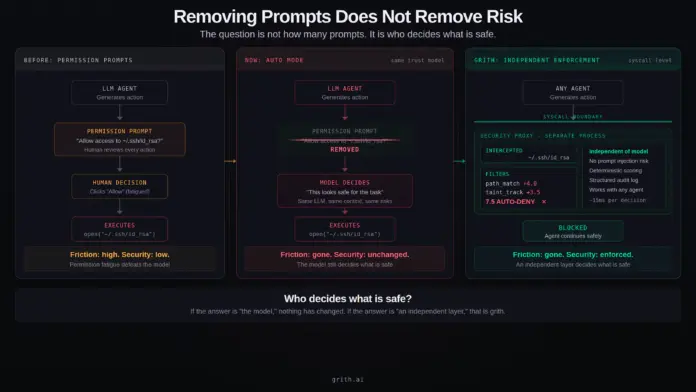

Claude’s newly introduced Auto Mode promises a smoother developer experience, but does it genuinely enhance security? Here’s the key breakdown:

-

Streamlined Experience: Auto Mode minimizes permission prompts, allowing for uninterrupted coding sessions. However, it shifts the decision-making power entirely to the AI model.

-

Underlying Risks:

- Auto Mode leaves the trust architecture unchanged, where model judgment remains the security layer.

- Prompt injections can easily manipulate decisions, leading to potential security breaches—fast-tracking developers toward risks rather than safeguarding them.

-

A Standout Solution: Grith eliminates the model from the trust boundary entirely. Instead of relying solely on LLM judgment to authorize actions:

- Actions are intercepted and analyzed through a security proxy,

- Decisions about safety can’t be influenced by AI’s reasoning.

Are we sacrificing security for efficiency? With the rise of autonomous AI agents, security enforcement must evolve.

🌟 Join the conversation! Share your thoughts on AI security architecture and explore how Grith sets a new standard.