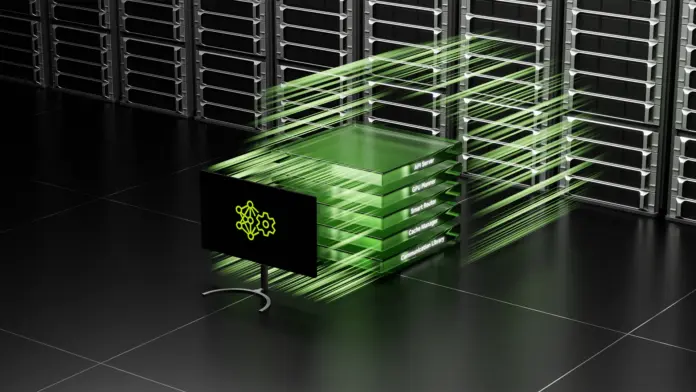

As large language model (LLM) workloads grow more complex, traditional monolithic serving architectures hit performance limits. Disaggregated serving addresses this by running the stages of the inference pipeline (prefill, decode, and routing) as independent services. Each stage can then be scaled and tuned for its own computational demands, which reduces GPU underutilization and improves scalability.
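To make the benefit of independent scaling concrete, here is a minimal sizing sketch. It is not taken from any specific tool: the `StageProfile` type, the `replicas_needed` helper, and every throughput and traffic number are hypothetical values chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class StageProfile:
    """Hypothetical description of one pipeline stage."""
    name: str
    tokens_per_request: float          # average tokens this stage handles per request
    tokens_per_sec_per_replica: float  # assumed sustained throughput of one replica


def replicas_needed(stage: StageProfile, requests_per_sec: float,
                    target_utilization: float = 0.8) -> int:
    """Size one stage on its own, leaving headroom below full utilization."""
    demand = requests_per_sec * stage.tokens_per_request
    usable_capacity = stage.tokens_per_sec_per_replica * target_utilization
    return max(1, math.ceil(demand / usable_capacity))


# Illustrative numbers only: long prompts, short completions.
prefill = StageProfile("prefill", tokens_per_request=2000,
                       tokens_per_sec_per_replica=40000)
decode = StageProfile("decode", tokens_per_request=300,
                      tokens_per_sec_per_replica=2500)

plan = {s.name: replicas_needed(s, requests_per_sec=50) for s in (prefill, decode)}
print(plan)
```

With these assumed numbers the two pools come out at different sizes. A monolithic deployment would have to scale both stages together, so one of them would be over-provisioned.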

Deploying disaggregated inference on Kubernetes depends heavily on scheduling. Gang scheduling places all the pods of a group together or not at all, and hierarchical gang scheduling extends that guarantee to nested groups. The scheduler can also improve performance by colocating pods that communicate heavily on nodes joined by high-bandwidth links. Tools such as NVIDIA Dynamo and the Grove operator provide frameworks for managing resource allocation and scaling, so that each stage keeps pace with workload demand.

Ultimately, disaggregated architectures give operators finer control over LLM performance and open up the scaling strategies that modern AI inference requires. To learn more about the evolving landscape of AI orchestration, follow the Kubernetes community's resources and attend KubeCon.
