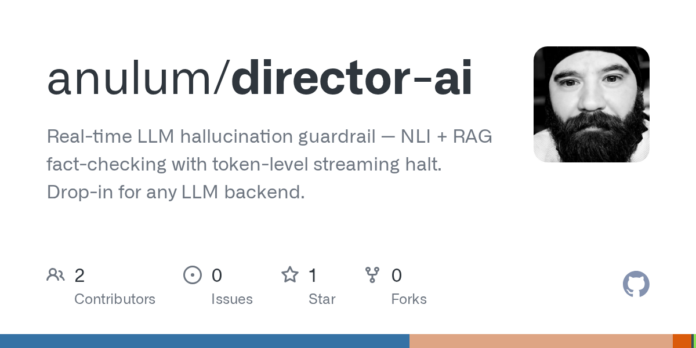

🚀 Transforming LLM Safety with Director-AI

As language models move into production, keeping their outputs coherent and factual is essential. Enter Director-AI: a tool that provides real-time hallucination guardrails for language models.

Key Features:

- Token-Level Streaming Halt: Monitors output coherence token-by-token, immediately halting generation if quality degrades.

- Dual-Entropy Scoring: Combines natural language inference (NLI) contradiction detection with retrieval-augmented generation (RAG) fact-checking against your own secure knowledge base.

- Customizable Knowledge Base: Easily ingest and validate your own data, from PDFs to custom text, and store it securely in ChromaDB.
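To make the streaming-halt and dual-scoring ideas concrete, here is a minimal Python sketch. It is not Director-AI's API: `dual_score`, `guarded_stream`, the 50/50 weighting, the 0.6 threshold, and the toy scorers are all hypothetical stand-ins for a real NLI model and a retrieval lookup against a knowledge base. It also blends the two signals linearly rather than reproducing whatever "dual-entropy" formula the tool actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List

# Hypothetical scorer type: maps the text generated so far to a value in [0, 1].
Scorer = Callable[[str], float]


@dataclass
class StreamResult:
    text: str      # text actually released to the user
    halted: bool   # True if the guard stopped generation early
    scores: List[float] = field(default_factory=list)  # one score per token checked


def dual_score(text: str, nli: Scorer, rag: Scorer, w_nli: float = 0.5) -> float:
    """Blend two signals into one coherence score (higher is better).

    nli: probability that `text` contradicts the context (so it is inverted).
    rag: how well `text` is supported by retrieved knowledge-base passages.
    The linear 50/50 blend is an illustrative assumption.
    """
    return w_nli * (1.0 - nli(text)) + (1.0 - w_nli) * rag(text)


def guarded_stream(tokens: Iterable[str], nli: Scorer, rag: Scorer,
                   threshold: float = 0.6) -> StreamResult:
    """Release tokens one at a time; halt before any token that drops the score below threshold."""
    emitted: List[str] = []
    scores: List[float] = []
    for tok in tokens:
        candidate = "".join(emitted) + tok
        score = dual_score(candidate, nli, rag)
        scores.append(score)
        if score < threshold:
            # The offending token is never released.
            return StreamResult("".join(emitted), True, scores)
        emitted.append(tok)
    return StreamResult("".join(emitted), False, scores)


# Toy stand-ins for a real NLI model and a ChromaDB-backed retriever.
def toy_nli(text: str) -> float:
    return 0.9 if "berlin" in text.lower() else 0.1


def toy_rag(text: str) -> float:
    return 0.2 if "berlin" in text.lower() else 0.9


if __name__ == "__main__":
    tokens = ["The", " capital", " of", " France", " is", " Berlin", "."]
    result = guarded_stream(tokens, toy_nli, toy_rag)
    print(result.text, result.halted)  # The capital of France is True
```

Swapping " Berlin" for " Paris" lets the full sentence through. Scoring each prefix before its newest token is released is what allows a guard like this to stop mid-sentence instead of flagging the answer after the fact.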

Benefits:

- Improve the coherence of generated output.

- Reduce the risk of misinformation in generated content.

- Customize settings to meet your specific needs.

Now is the time to elevate your AI applications. Explore Director-AI today and lead the way in responsible AI innovation! Share your thoughts below and let’s drive the conversation forward! 💬✨