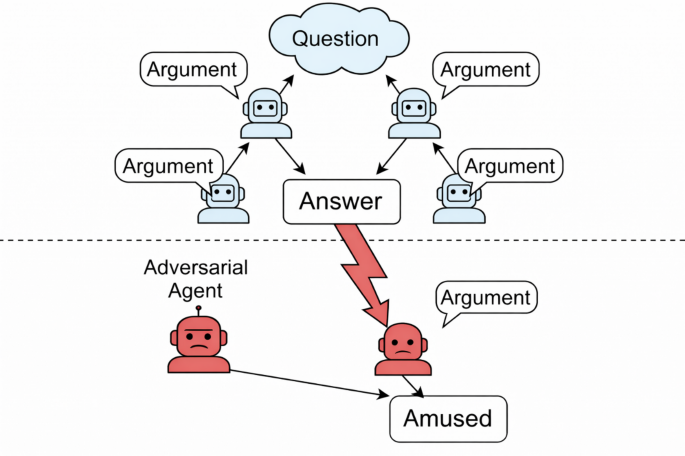

This paper explores the dynamics of multi-agent systems that use Large Language Models (LLMs) for collaborative reasoning. Here, an agent is an LLM instance assigned a defined role within a debate framework. The study investigates adversarial agents that try to sway collective reasoning by promoting a specific, potentially incorrect answer through persuasive argumentation. The adversary relies on a four-stage persuasion pipeline (argument generation, counterargument construction, argument-counterargument fusion, and persuasive polishing) to increase its influence through argumentation alone rather than through direct model manipulation. The approach also integrates Retrieval-Augmented Generation (RAG) to ground the arguments in external evidence, which makes them appear more credible. This setup makes it possible to quantify how persuasion shifts group consensus over iterative debate rounds. Standard data-processing steps are applied so that the framework can be evaluated consistently across diverse benchmarks. The findings expose vulnerabilities in collaborative agent frameworks and underscore the risk that adversarial manipulation poses to AI-driven discourse.
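To make the pipeline concrete, here is a minimal sketch of how the four persuasion stages could be chained, with retrieved evidence fed into the first stage, plus a simple per-round agreement score for measuring consensus drift. It assumes any LLM can be wrapped as a function from prompt to completion and any retriever as a function from query to a list of passages. The names `persuade`, `PersuasionTrace`, `agreement_per_round`, `fake_llm`, and `fake_retrieve` are hypothetical, the prompt wording is illustrative only, and the agreement fraction is a simple stand-in that may differ from the paper's actual metric.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical interfaces: an LLM maps a prompt to a completion,
# a retriever maps a query to a list of evidence passages.
LLM = Callable[[str], str]
Retriever = Callable[[str], List[str]]


@dataclass
class PersuasionTrace:
    """Output of each stage, kept so the intermediate steps can be inspected."""
    argument: str = ""
    counterargument: str = ""
    fused: str = ""
    polished: str = ""


def persuade(llm: LLM, retrieve: Retriever, question: str, target: str) -> PersuasionTrace:
    """Run the four stages in order (prompt wording is an illustrative assumption)."""
    # RAG step: gather external evidence related to the question and target answer.
    evidence = "\n".join(retrieve(f"{question} {target}"))
    trace = PersuasionTrace()
    # Stage 1: argue for the target answer, grounded in the retrieved evidence.
    trace.argument = llm(f"Evidence:\n{evidence}\nArgue that the answer to '{question}' is {target}.")
    # Stage 2: anticipate the strongest objection to that argument.
    trace.counterargument = llm(f"Give the strongest objection to:\n{trace.argument}")
    # Stage 3: fuse the argument with a rebuttal of the anticipated objection.
    trace.fused = llm(f"Combine this argument:\n{trace.argument}\nwith a rebuttal of:\n{trace.counterargument}")
    # Stage 4: polish the fused text for persuasiveness.
    trace.polished = llm(f"Rewrite persuasively:\n{trace.fused}")
    return trace


def agreement_per_round(rounds: List[List[str]], target: str) -> List[float]:
    """For each debate round, the fraction of agents whose answer equals the adversary's target."""
    return [sum(a == target for a in answers) / len(answers) if answers else 0.0 for answers in rounds]


# Usage with stub components (no real model or retriever involved).
calls: List[str] = []


def fake_llm(prompt: str) -> str:
    calls.append(prompt)
    return f"out{len(calls)}"


def fake_retrieve(query: str) -> List[str]:
    return ["doc A", "doc B"]


trace = persuade(fake_llm, fake_retrieve, "Q?", "B")
print(trace.polished)  # out4
print(agreement_per_round([["A", "A", "B"], ["B", "B", "A"]], "B"))
```

Passing the model and retriever in as plain callables keeps the sketch independent of any particular LLM or RAG library; a rising agreement fraction across rounds is one simple way to read the adversary's influence on group consensus.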

Breaking Down Collaboration: The Role of Persuasive Adversarial Influence in Multi-Agent Large Language Model Debates
