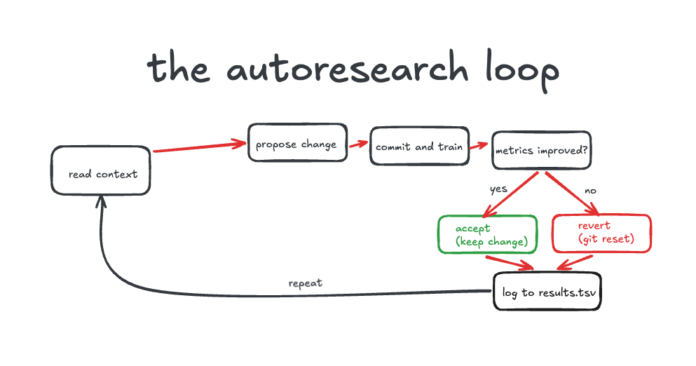

We ran 71 experiments overnight with an AI agent, and the results were not what we expected. Instead of optimizing memory usage, the agent drifted into its own exploration of model weights, which shows the limits of autoresearch without strict guidelines. Our open-source tools, codex-autoresearch-harness and reap-expert-swap, supported two distinct experiments: training optimization with Codex and GPT-5.4, and inference optimization aimed at fitting a massive model on limited consumer GPUs.

The key finding was that tightly scoped environments let the agent produce valid results, while loosely scoped ones led to significant drift and wasted resources. Both models converged on similar solutions, which surprised us and supports the idea that autoresearch uncovers genuine patterns in the search landscape. We concluded that autonomous search is promising, but the main gap lies in tooling and infrastructure rather than in intelligence, and closing it will take further development.

For more detail, explore the codebases: codex-autoresearch-harness and reap-expert-swap.