Exploring Stability in AI Responses on Political Candidates

As generative AI becomes a vital source of political information, understanding how consistent its answers are is crucial. Our latest analysis examines how AI chatbot responses vary when discussing political candidates and elections.

Key Findings:

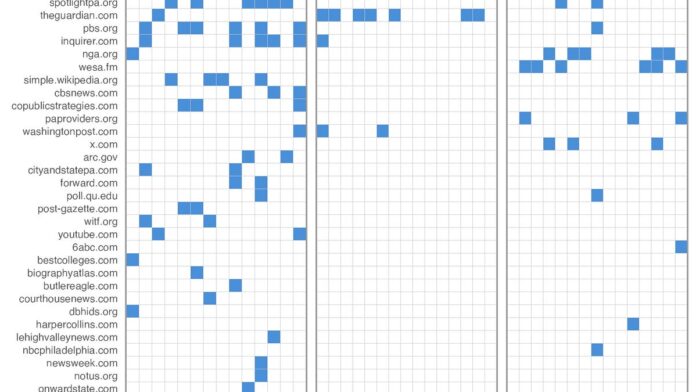

- Response Consistency: Models maintain high semantic similarity (0.94-0.95) but show notable variability in word choice and citations.

- Factors at Play:

  - Temperature Setting: Influences randomness in responses.

  - Model Implementation: Affects overall response variation.

- Citations Matter: When chatbots cite candidate websites, their responses align more closely with the content of those websites, especially for incumbents.
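To make the gap between semantic similarity and word choice concrete, here is a minimal sketch of how the two could be measured across repeated responses to the same prompt. It is an illustration under stated assumptions, not the method used in the analysis: the example responses and the three-dimensional "embedding" vectors are invented, and the helper names (`cosine_similarity`, `jaccard_overlap`, `mean_pairwise`) are hypothetical. In practice the vectors would come from a sentence-embedding model.

```python
import math
from itertools import combinations


def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors (semantic proxy)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def jaccard_overlap(text_a, text_b):
    """Share of distinct words two responses have in common (lexical proxy)."""
    words_a = set(text_a.lower().split())
    words_b = set(text_b.lower().split())
    union = words_a | words_b
    return len(words_a & words_b) / len(union) if union else 1.0


def mean_pairwise(items, metric):
    """Average a similarity metric over every unordered pair of items."""
    pairs = list(combinations(items, 2))
    return sum(metric(a, b) for a, b in pairs) / len(pairs)


# Hypothetical repeated responses to one prompt (invented for illustration).
responses = [
    "The candidate supports expanding public transit funding",
    "She backs more funding for public transportation",
    "The candidate favors increased investment in transit",
]

# Assumption: made-up toy vectors standing in for real sentence embeddings.
embeddings = [
    [0.90, 0.10, 0.40],
    [0.85, 0.15, 0.45],
    [0.88, 0.12, 0.38],
]

semantic = mean_pairwise(embeddings, cosine_similarity)
lexical = mean_pairwise(responses, jaccard_overlap)
print(f"mean semantic similarity: {semantic:.2f}")
print(f"mean lexical overlap:     {lexical:.2f}")
```

With these toy inputs the responses say nearly the same thing in mostly different words, so the semantic score is high while the lexical score is low. That is the pattern the first finding describes.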

What’s Next?

Next, we will look at how the impact of citations changes over time and how the information landscape shifts as AI models evolve.

Join the conversation! Share your thoughts on how AI is shaping political discourse. Let’s connect on this transformative journey! 🚀