Understanding Prompt Injection Risks in AI-Powered SaaS

Prompt injection is a growing security vulnerability in AI-driven SaaS environments, allowing attackers to manipulate AI behavior through crafted inputs. Instead of breaching the AI model directly, malicious actors exploit how models interpret commands, leading to unauthorized actions and data exposure. This threat is not theoretical; real-world instances include customer support chatbots divulging sensitive information and AI assistants leaking confidential data via manipulated emails.

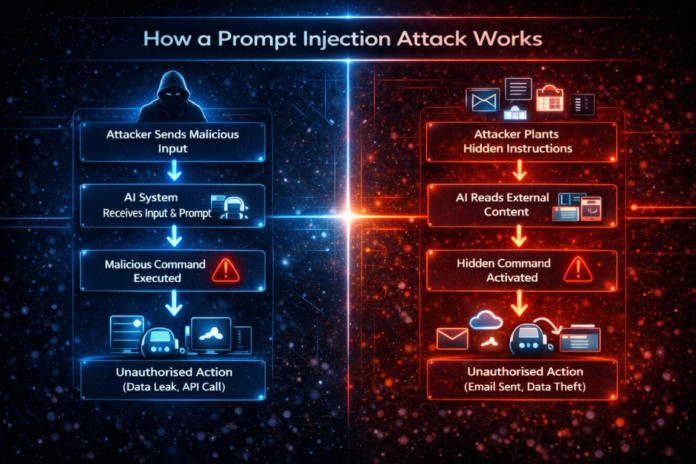

Prompt injection attacks primarily fall into two categories: direct and indirect. Direct attacks provide malicious instructions straight to the AI, while indirect attacks embed harmful commands in external data the AI processes. The proliferation of SaaS tools with expansive permissions amplifies these risks, as successful injections can trigger impactful actions across multiple systems.

To mitigate these threats, organizations should implement strict access controls, input validation, and continuous monitoring of AI behavior. Effective governance is essential in ensuring that AI tools operate securely within enterprise environments, thereby reducing the likelihood of prompt injection vulnerabilities.