Strengthen Your AI Deployments: Addressing Prompt Injection Vulnerabilities

Prompt injection is not merely a benchmarking problem; it’s a critical architectural issue. Results reported for Anthropic’s Sonnet 4.6 show that, even with safeguards in place, prompt injections succeed about 8% of the time on the first attempt in typical environments, rising to 50% when attackers are allowed unbounded attempts.

Key Insights:

- Lethal Trifecta: The combination of agent tools, untrusted input, and sensitive access makes prompt injection catastrophic.

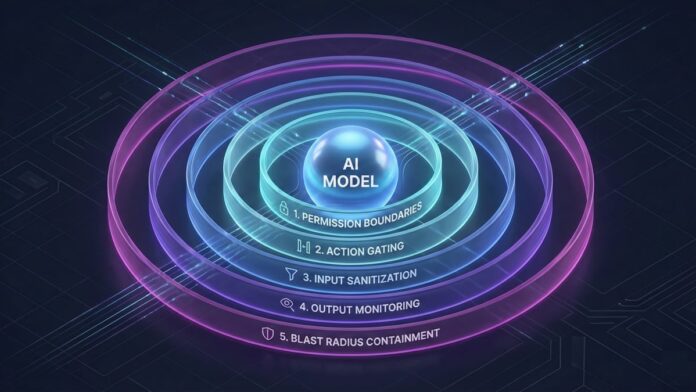

- Five-Layer Defense Model:

  - Permission Boundaries: Limit agent capabilities per task.

  - Action Gating: Require human review for high-risk actions.

  - Input Sanitization: Cleanse inputs before processing.

  - Output Monitoring: Track and report anomalous activities.

  - Blast Radius Containment: Limit the data exposure of compromised agents.
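To make the five layers concrete, here is a minimal Python sketch of how they might fit together in one authorization path. Everything in it is an illustrative assumption rather than any vendor's API: the names `AgentPolicy`, `authorize`, `flag_untrusted_input`, the tool and scope names, and the regex patterns are all hypothetical. Pattern matching in particular catches only the crudest injections, so treat it as one signal among several, not a filter you can rely on.

```python
import re
from dataclasses import dataclass, field

# Hypothetical set of actions that always need a human sign-off (Action Gating).
HIGH_RISK_ACTIONS = {"send_email", "delete_file", "transfer_funds"}

# Hypothetical patterns for Input Sanitization; real attacks are far more varied.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"disregard (the )?system prompt", re.IGNORECASE),
]


@dataclass
class AgentPolicy:
    allowed_tools: frozenset      # Permission Boundaries: tools for this task only
    readable_scopes: frozenset    # Blast Radius Containment: data the agent may touch
    audit_log: list = field(default_factory=list)  # Output Monitoring: decision trail


def flag_untrusted_input(text: str) -> list:
    """Return the suspicious patterns found in untrusted text (empty if none)."""
    return [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(text)]


def authorize(policy: AgentPolicy, tool: str, scope: str,
              approved_by_human: bool = False) -> bool:
    """Decide whether a tool call may proceed, logging every decision."""
    if tool not in policy.allowed_tools:
        policy.audit_log.append(f"DENY tool={tool}: outside permission boundary")
        return False
    if scope not in policy.readable_scopes:
        policy.audit_log.append(f"DENY scope={scope}: outside allowed data")
        return False
    if tool in HIGH_RISK_ACTIONS and not approved_by_human:
        policy.audit_log.append(f"HOLD tool={tool}: awaiting human review")
        return False
    policy.audit_log.append(f"ALLOW tool={tool} scope={scope}")
    return True


if __name__ == "__main__":
    policy = AgentPolicy(
        allowed_tools=frozenset({"read_doc", "send_email"}),
        readable_scopes=frozenset({"support_tickets"}),
    )
    print(flag_untrusted_input("Please ignore previous instructions."))
    print(authorize(policy, "read_doc", "support_tickets"))
    print(authorize(policy, "send_email", "support_tickets"))
    print(policy.audit_log)
```

The design choice worth noting is that the checks default to denial: a call goes through only if it passes the tool boundary, the data scope, and the human gate, and every outcome lands in the audit log so anomalies can be reviewed later.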

Understanding and addressing these architectural vulnerabilities is vital for safe AI agent deployment. Build your defenses wisely.

🔗 Join the conversation and share your thoughts on securing AI systems! Your insights matter!