Navigating the Evolving Landscape of AI Benchmarking

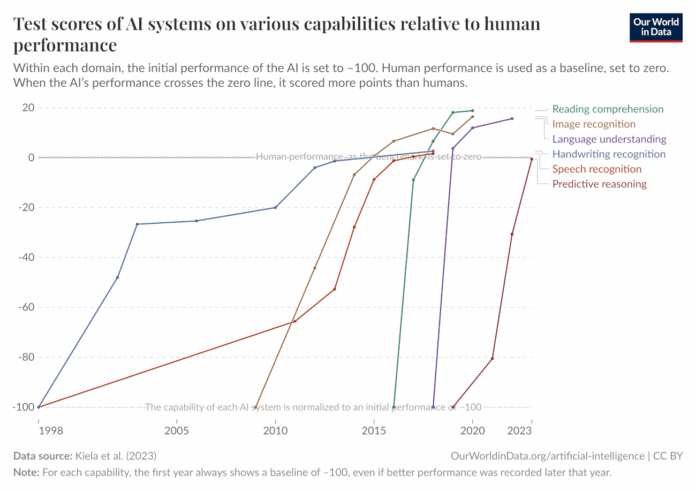

As we step into 2026, the challenge of upper-bounding AI capabilities using fixed benchmarks has intensified. Benchmarks once considered difficult are saturating rapidly, which underscores the urgent need for new evaluation methods.

Key Takeaways:

- Benchmark Saturation: High-performing models like Anthropic’s Claude Opus 4.6 have excelled on traditional benchmarks, leaving those benchmarks with little headroom to measure further gains.

- Alternative Methodologies: The need for robust, cost-effective measures has emerged:

  - Innovative uplift studies measuring real-world impacts.

  - Expert forecasting and opinion elicitation to assess capabilities.

  - Third-party risk assessment for unbiased evaluations.

Looking Forward:

Experts emphasize the need for a dynamic approach to assessing AI capabilities, because evaluations that rely on outdated benchmarks could fail to identify potential risks.

Join the Conversation!

How do you envision the future of AI benchmarking? Share your thoughts and insights below!