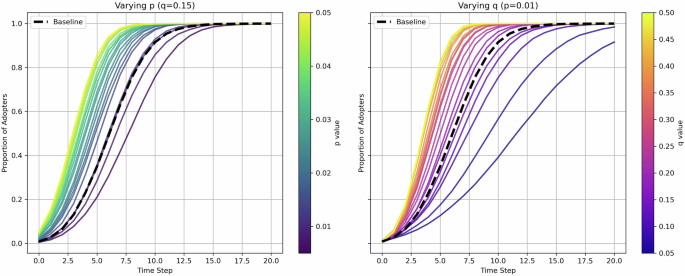

Large language models (LLMs) such as GPT-4 and Claude 2 are increasingly used to simulate human systems. Because they generate coherent, human-like text, they make it possible to build artificial societies whose autonomous agents exhibit complex social behaviors. This shift from conventional rule-based agents to LLM-driven agents yields narrative-rich simulations that capture more of the complexity of human life. However, hallucinations, bias, and security issues remain significant hurdles. Methodologically, LLMs risk making simulations “too human” at the cost of interpretability, which conflicts with traditional modeling objectives. A study built on the Bass diffusion model shows that, despite their expressiveness, LLM agents struggle with temporal mismatches and with opaque decision-making processes, which produces results that are hard to interpret and complicates causal analysis. It is therefore crucial to identify domains suited to LLMs, namely contexts where linguistic nuance matters more than system-level emergence. As LLM technology evolves, critical examination of its role in social simulation remains essential.
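For readers unfamiliar with the Bass diffusion model mentioned above, here is a minimal sketch of its classic rule-based form in Python. It is not the study's code: the function name `bass_diffusion`, the discrete one-period time step, and the parameter values in the usage note are assumptions chosen for illustration. In each period, new adopters equal `(p + q * N / M) * (M - N)`, where `p` is the innovation coefficient, `q` the imitation coefficient, `N` the cumulative adopters so far, and `M` the market size.

```python
def bass_diffusion(market_size, p, q, steps):
    """Discrete-time Bass diffusion (illustrative sketch, not the study's code).

    market_size: total number of potential adopters (M)
    p: coefficient of innovation (adoption independent of others)
    q: coefficient of imitation (adoption driven by existing adopters)
    steps: number of time periods to simulate
    Returns the cumulative number of adopters after each period.
    """
    cumulative = 0.0
    history = []
    for _ in range(steps):
        # The adoption rate grows with the share of the market that has already adopted.
        adoption_rate = p + q * cumulative / market_size
        # Only those who have not yet adopted can adopt in this period.
        new_adopters = adoption_rate * (market_size - cumulative)
        cumulative += new_adopters
        history.append(cumulative)
    return history
```

Calling `bass_diffusion(1000, 0.03, 0.38, 30)` (hypothetical parameter values) produces the familiar S-shaped adoption curve. Every step is an explicit formula, which is the kind of interpretability the summary says is at risk when agents' decisions are delegated to an LLM.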

Navigating the Uncanny Valley: The Limitations of Large Language Models in Mimicking Human Systems
