Unlocking the Power of Context Engineering in AI

In AI, perception often diverges from reality. LLM (large language model) demos may dazzle with instant results, but questions about real functionality arise once tasks become complex. Understanding the nuances of context engineering is key to bridging this gap.

Key Insights:

- Context Window Limitations: LLMs work within a finite context window (a fixed token budget), which limits how much information they can draw on in real tasks.

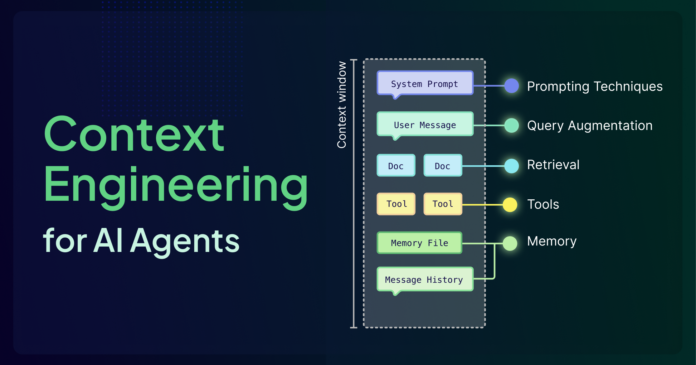

- Engineering Systems: Effective context engineering involves integrating retrieval, memory, and tools to optimize decision-making.

- Six Pillars: Explore components like agents, query augmentation, and memory management that collectively enhance AI reliability.

- Actionable Techniques: Mastering memory architecture and tool orchestration is crucial for transforming flashy demos into dependable systems.
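
To make the context window limitation listed above concrete, here is a minimal Python sketch that keeps only the most recent messages that fit a token budget. Everything in it is an assumption for illustration: the function name `fit_to_budget`, the sample history, and the whitespace word count that stands in for a real tokenizer. Production systems would likely combine this kind of trimming with retrieval or summarization.

```python
def fit_to_budget(messages, budget, count_tokens=lambda text: len(text.split())):
    """Keep the most recent messages whose combined token count fits the budget.

    count_tokens is a hypothetical stand-in: it counts whitespace-separated
    words, which only approximates what a real tokenizer would report.
    """
    kept = []
    used = 0
    # Walk backwards so the newest messages are considered first.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > budget:
            break  # Adding this message would exceed the budget.
        kept.append(message)
        used += cost
    kept.reverse()  # Restore chronological order.
    return kept


# Hypothetical conversation history, oldest first.
history = [
    "user: summarize the quarterly report",
    "assistant: here is a summary of the report",
    "user: now compare it with last year",
]

# With a budget of 15 "tokens", only the two newest messages fit.
context = fit_to_budget(history, budget=15)
```

Dropping the oldest turns first is only one possible policy; it is simple, but it can discard instructions given early in a conversation.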

By mastering context engineering, you’ll create AI applications that don’t just respond but intelligently adapt.

👉 Let’s discuss your experiences with context engineering and share insights! 💡 #AI #ContextEngineering #TechInnovation