Exploring Trust in AI Agents: The ATDP Framework

Understanding how AI agent behavior influences social capital is a complex challenge. A new research article lays the groundwork for empirically testing the ATDP (Agentic Trust Design for Positive Social Capital) framework. Here are the highlights:

Key Predictions:

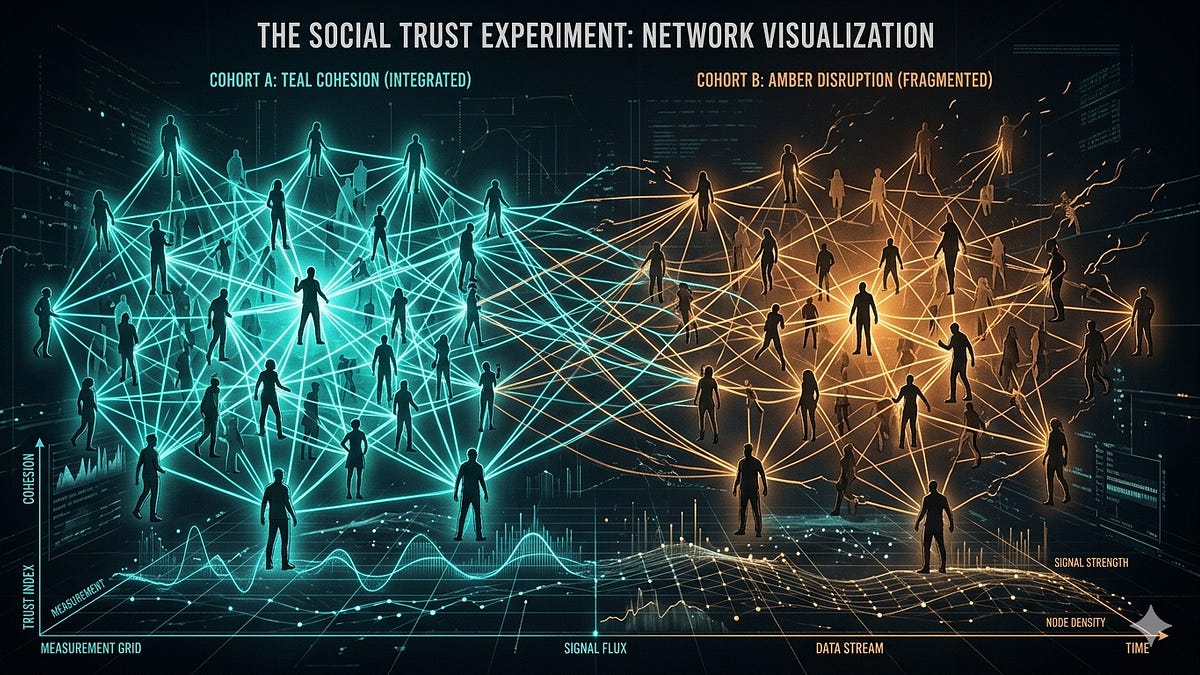

- Prediction 1: High-substitution deployments can lead to a decline in community-level trust despite positive dyadic metrics.

- Prediction 3: Agents that openly share their failures may bolster social capital, contrary to the existing bias toward agents that project confidence.

Experimental Design:

- This study prioritizes observable trust behaviors over self-reported measures.

- Users will engage with an agent under two distinct conditions: one in which the agent transparently acknowledges its failures, and one in which it suppresses them.
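To make the two-condition setup concrete, here is a minimal Python sketch of how such a comparison might be organized. It is an illustration under stated assumptions, not the study's actual protocol: the condition labels, the random assignment step, and the "delegation rate" measure (how often a participant hands a follow-up task back to the agent) are all hypothetical stand-ins for whatever observable trust behavior the researchers record.

```python
import random
from statistics import mean

# Hypothetical labels for the two conditions described above.
CONDITIONS = ("transparent", "suppressed")


def assign_conditions(participant_ids, seed=0):
    """Randomly split participants into two groups whose sizes differ by at most one."""
    rng = random.Random(seed)
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    return {pid: CONDITIONS[i % 2] for i, pid in enumerate(shuffled)}


def delegation_rate(choices):
    """Assumed behavioral measure: share of follow-up tasks handed to the agent.

    `choices` is a list of booleans, True meaning the participant delegated.
    """
    return sum(choices) / len(choices)


def condition_means(assignment, behavior_log):
    """Average the behavioral measure within each condition."""
    rates = {condition: [] for condition in CONDITIONS}
    for pid, condition in assignment.items():
        rates[condition].append(delegation_rate(behavior_log[pid]))
    return {condition: mean(values) for condition, values in rates.items()}
```

The point of the sketch is that the comparison rests on what participants do (logged choices) rather than on what they report in a survey; a real analysis would also need a significance test and a justified sample size.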

Importance of Findings:

- Confirming or refuting these predictions could reshape how we design AI agents and their trust-building characteristics.

Curious to see how these groundbreaking tests unfold? Follow this research journey, and let’s redefine trust in AI together! Share your thoughts and insights.