Unlock the Power of AI Data Analysis with MLJAR!

Dive into our AI Data Analyst benchmark, which evaluates leading large language models (LLMs) on real Python tasks. Here’s what you’ll discover:

- Comprehensive Evaluation: Compare modern LLMs across multiple domains, including machine learning and finance.

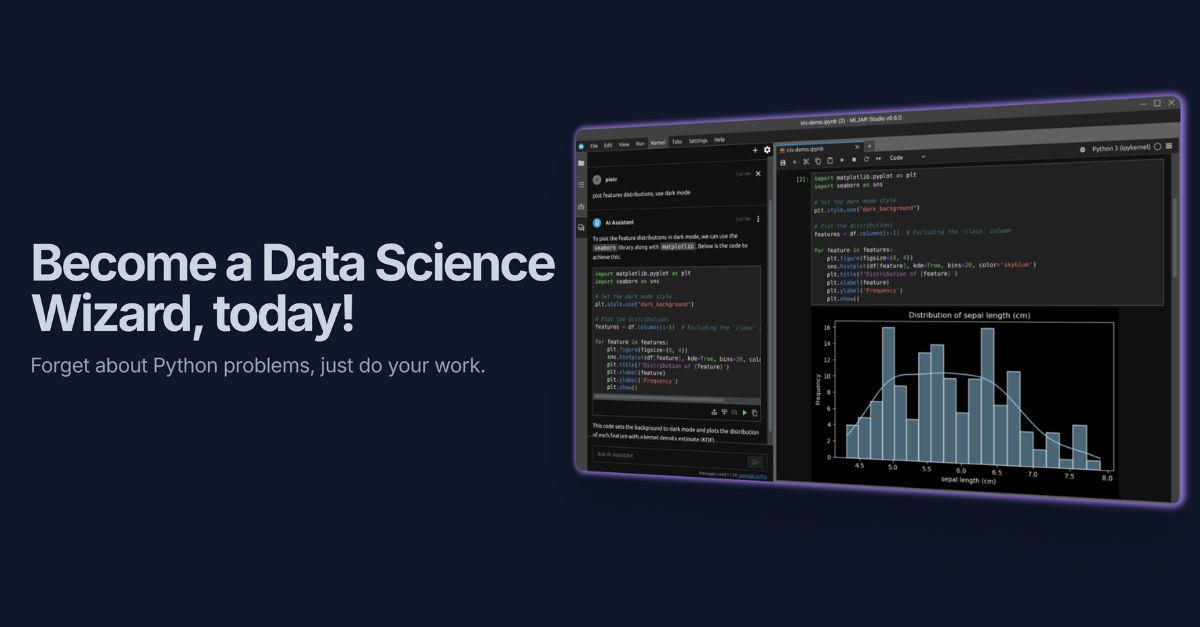

- Interactive Pipelines: Each scenario simulates a data analyst’s workflow step by step, using practical prompts processed in MLJAR Studio.

- Open Access: Explore pipelines and results on GitHub, complete with Python notebook artifacts for easy review.

Key Insights:

- Best overall model: gpt-oss:120b (Score: 9.87/10)

- Most consistent: gpt-oss:120b (Standard deviation: 0.45)

- Weakest on complex tasks: qwen3.5:397b (Score: 6.33/10)
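
Curious how rankings like these can be derived? Here is a minimal sketch. It assumes each model gets one 0-10 score per task, that "best overall" means the highest mean score, and that "most consistent" means the lowest standard deviation across tasks. The model names and numbers below are hypothetical placeholders, not benchmark results, and the benchmark's actual aggregation may differ.

```python
from statistics import mean, pstdev

# Hypothetical per-task scores on a 0-10 scale.
# Illustrative values only; these are NOT results from the benchmark.
scores = {
    "model_a": [10.0, 9.0, 10.0, 9.0],
    "model_b": [10.0, 6.0, 8.0, 4.0],
}


def summarize(per_task):
    """Return the mean and population standard deviation of a list of scores."""
    # Assumption: population std (pstdev); the benchmark may use sample std instead.
    return {"mean": mean(per_task), "std": pstdev(per_task)}


summary = {name: summarize(vals) for name, vals in scores.items()}

# Highest mean score is treated as "best overall".
best_overall = max(summary, key=lambda m: summary[m]["mean"])

# Lowest spread across tasks is treated as "most consistent".
most_consistent = min(summary, key=lambda m: summary[m]["std"])

for name, stats in summary.items():
    print(f"{name}: mean={stats['mean']:.2f}, std={stats['std']:.2f}")
print("Best overall:", best_overall)
print("Most consistent:", most_consistent)
```

A low standard deviation only says a model scores similarly from task to task, so it is worth reading alongside the mean: a model can be consistently good or consistently weak.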

Ready to up your data analysis game with AI? 🚀

Check it out, share your thoughts, and let’s innovate together!