Unlock AI’s Potential with TurboQuant: A Game Changer in Memory Management

TurboQuant, an algorithm from Google Research, targets one of generative AI’s main constraints: memory. It reduces a model’s memory footprint while boosting performance and maintaining accuracy.

Key Highlights:

- Massive Efficiency: Delivers a reported 8x performance increase and a 6x reduction in memory usage without compromising output quality.

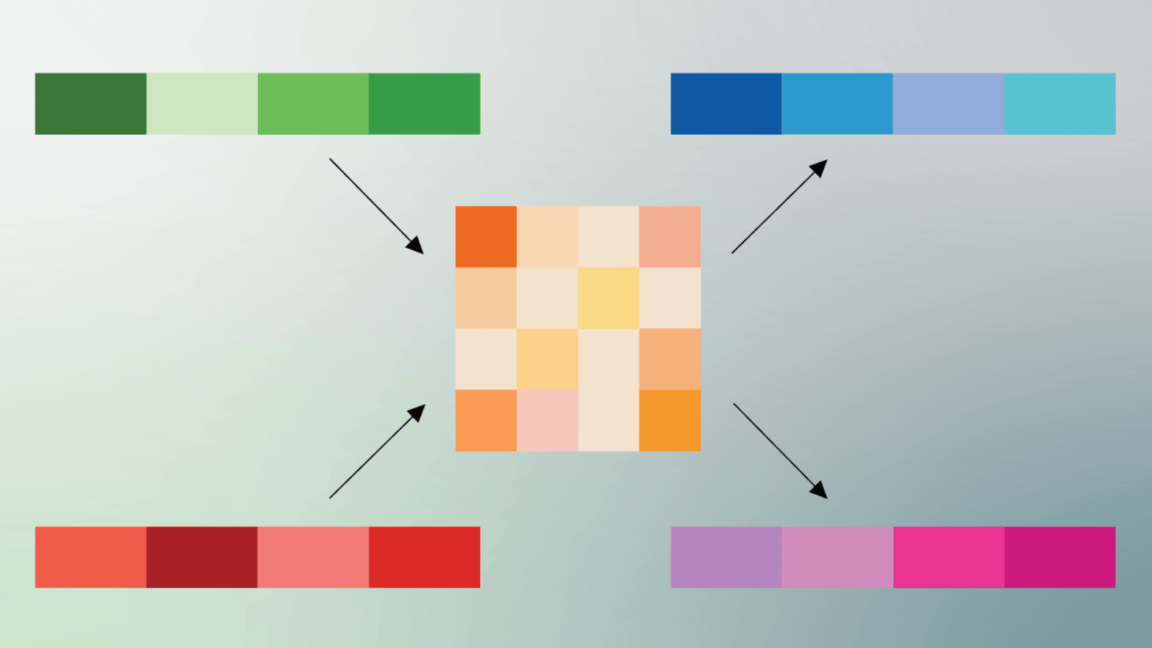

- Smart Compression: Uses a two-step process that includes PolarQuant, which converts standard vectors into polar coordinates to simplify data storage.

- Enhanced Learning: Keeps essential “digital cheat sheets” intact, allowing models to deliver high-quality outputs based on semantic similarity.
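
To make the polar-coordinate idea from the Smart Compression point concrete, here is a minimal Python sketch. It is not the published PolarQuant algorithm. It assumes a simplified scheme in which a vector is split into 2-D pairs, each pair is rewritten as a radius and an angle, and only the angle is rounded to a small number of bits. The function names (`to_polar_pairs`, `quantize_angle`, `roundtrip`) and the choice to keep the radius at full precision are illustrative assumptions, not details taken from TurboQuant.

```python
import numpy as np

def to_polar_pairs(x):
    """Split an even-length vector into 2-D pairs; return (radius, angle) per pair."""
    pairs = x.reshape(-1, 2)
    radius = np.hypot(pairs[:, 0], pairs[:, 1])
    angle = np.arctan2(pairs[:, 1], pairs[:, 0])  # values in [-pi, pi]
    return radius, angle

def quantize_angle(angle, bits):
    """Round each angle to one of 2**bits evenly spaced directions (integer codes)."""
    levels = 2 ** bits
    step = 2 * np.pi / levels
    # The modulo wraps the top bin back to 0, since -pi and +pi are the same direction.
    return np.round((angle + np.pi) / step).astype(np.int64) % levels

def dequantize_angle(codes, bits):
    """Map integer codes back to angles in [-pi, pi)."""
    step = 2 * np.pi / (2 ** bits)
    return codes * step - np.pi

def from_polar_pairs(radius, angle):
    """Rebuild the flat vector from per-pair radius and angle."""
    pairs = np.stack([radius * np.cos(angle), radius * np.sin(angle)], axis=1)
    return pairs.reshape(-1)

def roundtrip(x, bits):
    """Compress and reconstruct x, quantizing only the angles (assumption:
    the radius is stored at full precision in this toy version)."""
    radius, angle = to_polar_pairs(x)
    codes = quantize_angle(angle, bits)
    return from_polar_pairs(radius, dequantize_angle(codes, bits))

if __name__ == "__main__":
    x = np.array([3.0, 4.0, -1.0, 0.0, 0.5, -0.5, 0.0, 2.0])
    x_hat = roundtrip(x, bits=4)
    print("original:     ", x)
    print("reconstructed:", np.round(x_hat, 3))
```

In this sketch each angle is stored as a 4-bit code rather than two full-precision floats, which is where the storage saving would come from. The reconstruction error for each pair is bounded by its radius times half the angular step.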

By lowering memory requirements, this breakthrough could make AI more accessible and practical for a broader range of applications.
