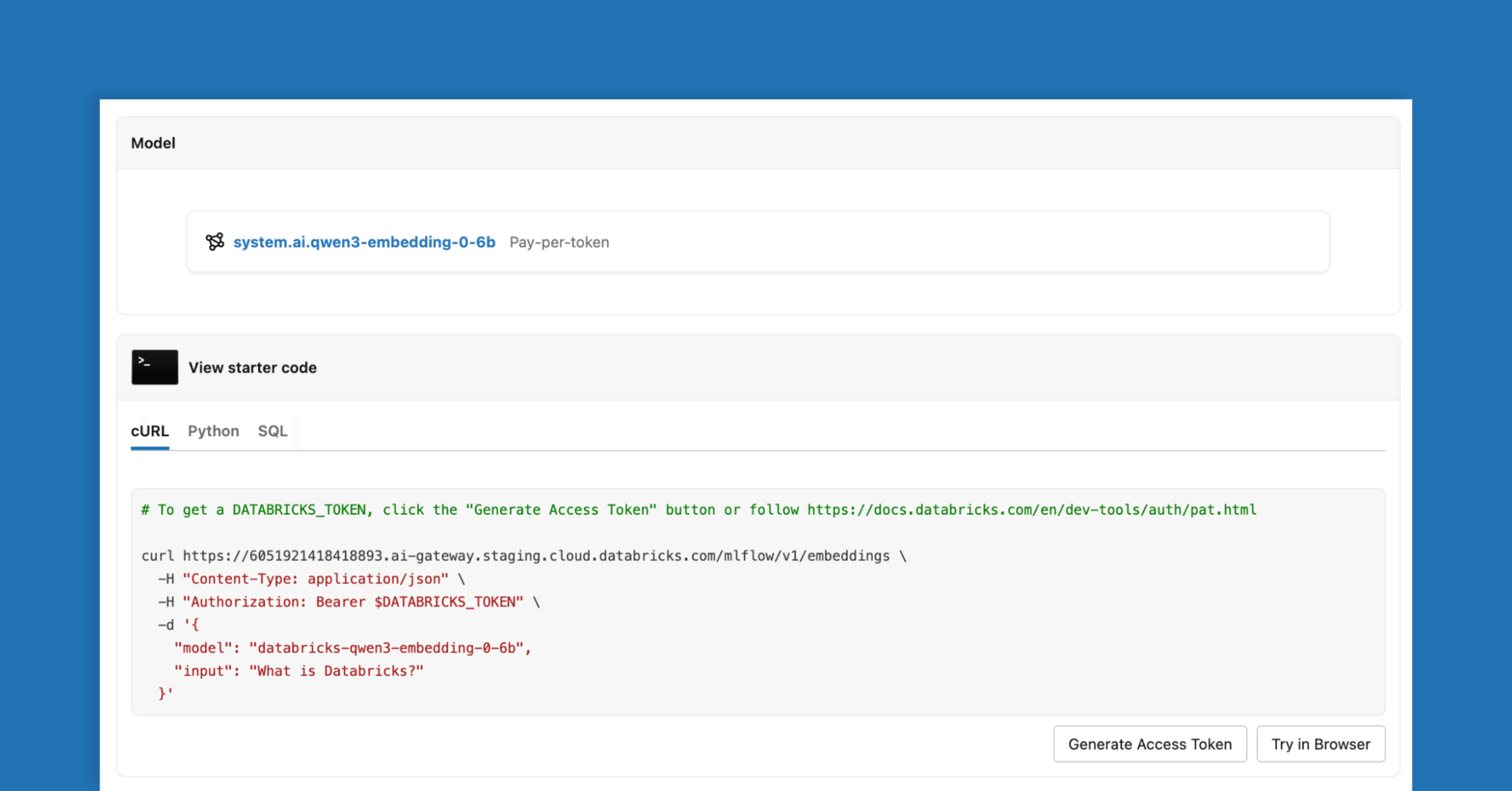

Retrieval quality in modern AI systems depends heavily on the quality of the embedding model. Qwen3-Embedding-0.6B, newly available on Databricks, offers strong retrieval performance, multilingual coverage, and secure serverless deployment, and is intended to give AI agents access to enterprise data within Databricks. Built on the Qwen3 foundation model, it supports a maximum context length of 32k tokens, which gives teams more flexibility in how they chunk documents. Because the model is instruction-aware, teams can supply task-specific instructions with their queries, which improves retrieval performance by 1-5%. As the first multilingual embedding model on Databricks, Qwen3-Embedding-0.6B covers over 100 languages and supports tasks such as cross-language retrieval. The model runs on secure, managed serverless GPUs, which helps teams meet data residency requirements. It is suited to semantic search and multilingual retrieval applications, and it is available on Databricks across various model serving surfaces.
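To make the instruction-aware retrieval flow concrete, here is a minimal sketch in Python. It is not the Databricks API. The `Instruct: ...\nQuery:...` template is an assumption modeled on the convention commonly shown for Qwen3-Embedding and should be confirmed against the model card. `toy_embed` is a hypothetical stand-in (a hashed bag of words) for a real call to the served model, so the ranking logic can run without any endpoint.

```python
import hashlib
import math


def format_query(task: str, query: str) -> str:
    """Prefix a query with a task instruction (assumed template, not confirmed by the source)."""
    return f"Instruct: {task}\nQuery:{query}"


def toy_embed(text: str, dim: int = 64) -> list[float]:
    """Hypothetical placeholder for a real embedding call: unit-normalized hashed bag of words."""
    vec = [0.0] * dim
    for token in text.lower().split():
        digest = hashlib.sha256(token.encode("utf-8")).digest()
        vec[int.from_bytes(digest[:4], "big") % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; reduces to a dot product because inputs are unit-normalized."""
    return sum(x * y for x, y in zip(a, b))


def rank(query_text: str, documents: list[str], embed=toy_embed) -> list[tuple[float, str]]:
    """Score each document against the query and return (score, document) pairs, best first."""
    q = embed(query_text)
    scored = [(cosine(q, embed(doc)), doc) for doc in documents]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


if __name__ == "__main__":
    task = "Given a support question, retrieve passages that answer it"
    query = format_query(task, "how do I reset my password")
    docs = [
        "To reset your password, open account settings.",
        "Quarterly revenue grew in the last fiscal year.",
    ]
    for score, doc in rank(query, docs):
        print(f"{score:.3f}  {doc}")
```

In a real deployment, `toy_embed` would be replaced by a request to the model serving endpoint. Only the query carries the instruction in this sketch; documents are embedded as-is.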
