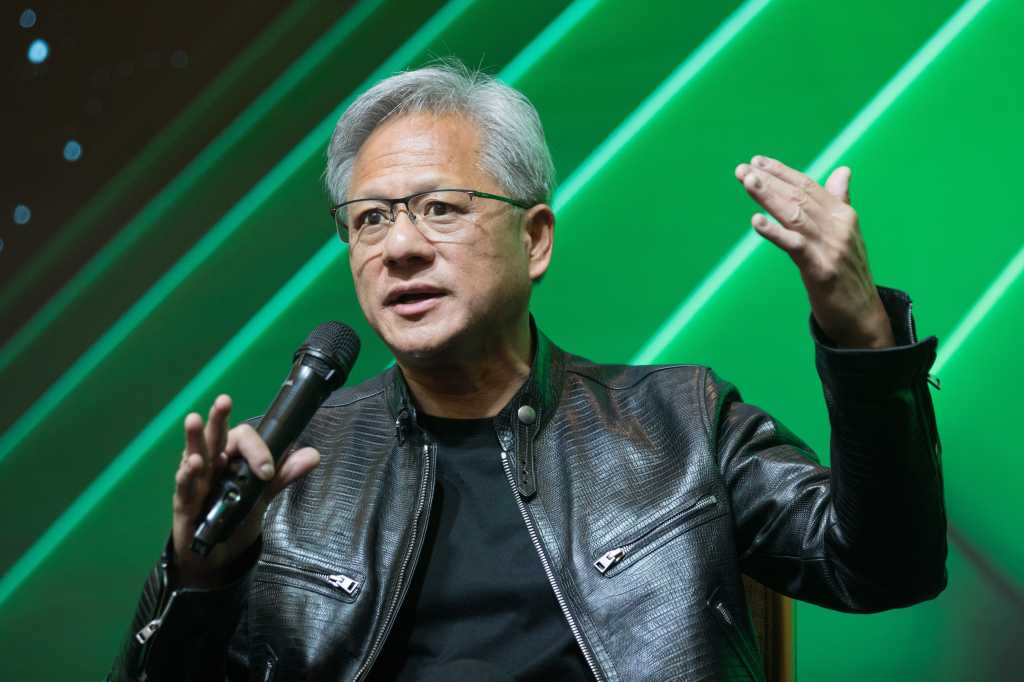

Nvidia designs its chips for what it calls AI factories, which generate the tokens companies need to carry out their AI strategies. Nvidia measures the revenue from those tokens in tokens-per-watt, and CEO Jensen Huang says that unused power translates directly into lost revenue. At GTC, Nvidia has focused largely on inference rather than training, and industry analyst Jack Gold notes that inference won’t incur “gigabucks” in token costs. Nvidia also asserts that its Vera Rubin system will reduce computing costs, but Gold suggests the implications of that claim may be more complex than Nvidia presents them. Together, these points show how closely efficient power usage is tied to profitability in AI. For businesses looking to optimize their AI capabilities, Nvidia’s advancements offer insight into cost management and operational efficiency.
