Unpacking AI Efficiency: Insights from My Quantization Journey

In my twelfth post on building scalable business process automation, I dive deep into the intricacies of benchmarking Llama 3 models for real-world applications. Here’s what I explored:

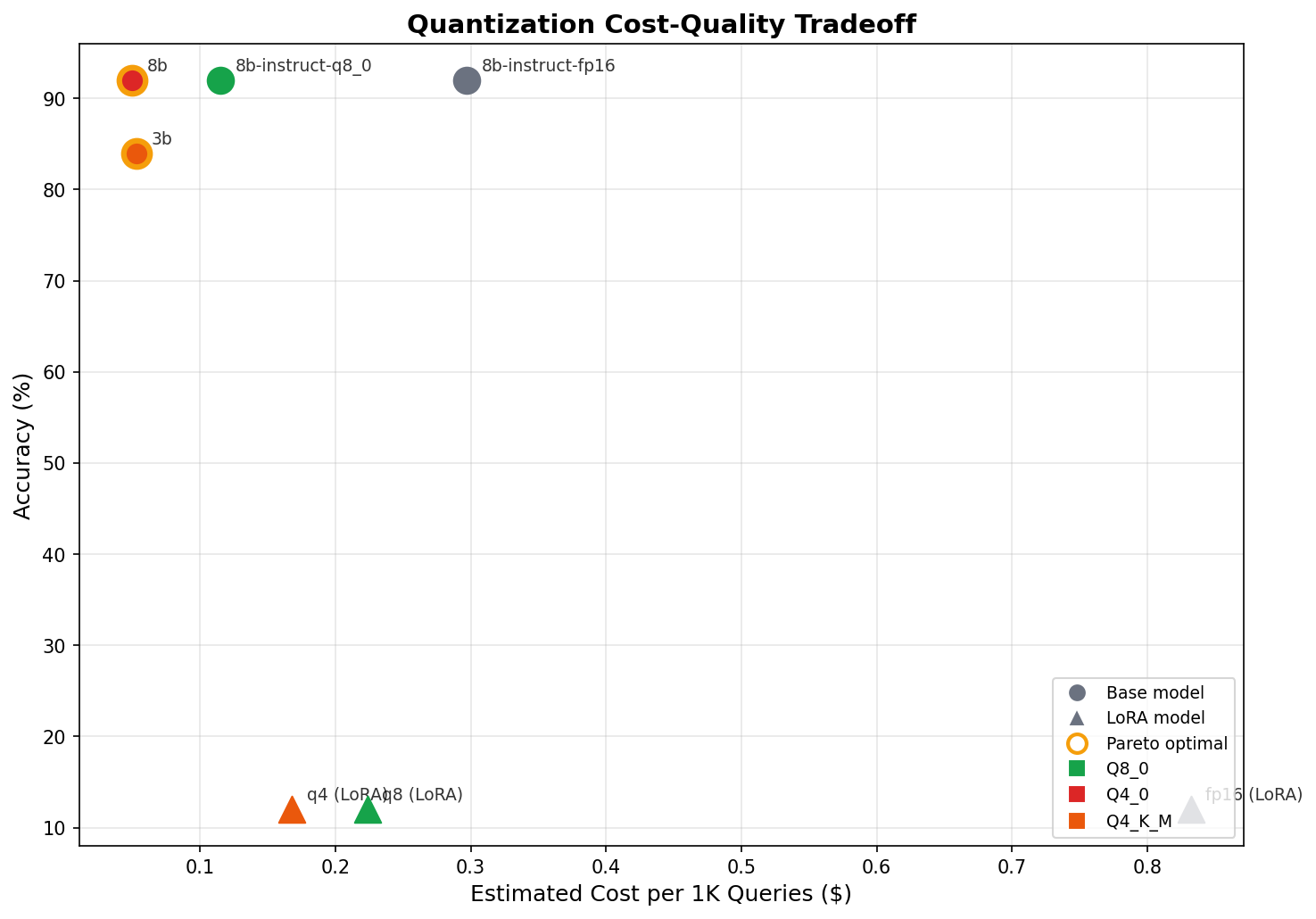

- Quantization Insights: I benchmarked 7 Llama 3 variants and found that lower-precision models can reach 92% accuracy without sacrificing performance.

- Small Models, Big Lessons: The 3B model underperformed, exposing the trade-off between model capacity and accuracy. Its mistakes showed how complex model reasoning is to get right.

- Self-Training Success: I built a self-training pipeline that generated 542 labeled examples with zero manual effort, showing that training data can be produced cost-effectively.

- Fine-Tuning Failures: My QLoRA fine-tuning runs showed that overly aggressive adjustments can cause catastrophic forgetting, a valuable lesson in the limits of model adaptability.

What’s next? Refining the model strategy and strengthening the supporting architecture so the system stays durable.

🔗 Curious about automating your processes or exploring AI optimization? Let’s connect and discuss!