Unlock the Power of Local AI: A Comprehensive Guide to OpenClaw Assistant Clusters

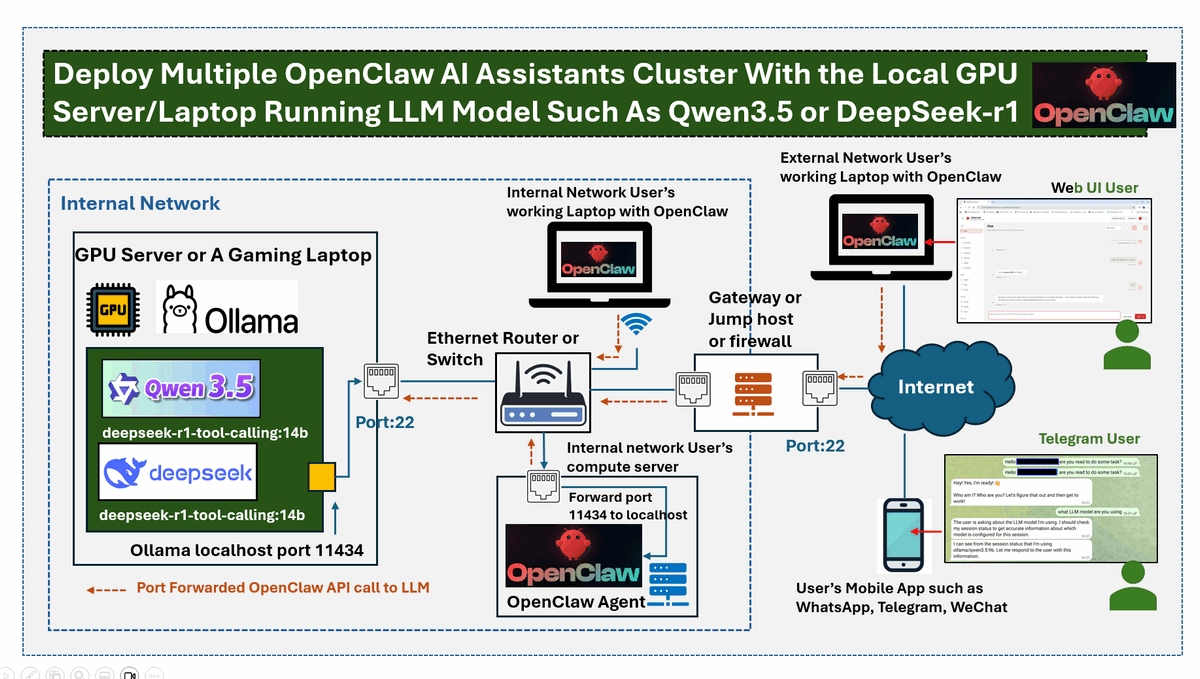

Explore how to deploy OpenClaw AI assistants without relying on cloud APIs. This article outlines the design of a local AI cluster built on open-source Large Language Models (LLMs).

Key Highlights:

- Project Overview:
  - Run everything on a GPU-enabled server or gaming laptop.
  - Reduce cloud costs by running open-source models such as Qwen 3.5 and DeepSeek-R1 locally.

- System Design:
  - A centralized AI reasoning engine serves distributed OpenClaw agents.
  - Agents reach the engine through SSH port forwarding for secure access.

- Setup Process:
  - Step-by-step guidance for configuring your GPU server.
  - Instructions for local installation of OpenClaw and LLMs.

- Security Measures:
  - Create a user account for each agent and manage access per account.
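
To make the SSH port forwarding idea concrete, here is a minimal sketch of how an agent machine might build the tunnel command to the GPU server. The user name `agent1`, the host `gpu-server.example`, and port `8080` are hypothetical placeholders rather than values from the article; substitute whatever account and port your model server actually uses.

```python
import shlex


def build_tunnel_command(user, host, local_port, remote_port):
    """Return the argv list for an SSH local port forward (ssh -L).

    -N tells ssh not to run a remote command (tunnel only).
    -L binds local_port on this machine and forwards it to
    127.0.0.1:remote_port as seen from the remote host.
    """
    forward = f"{local_port}:127.0.0.1:{remote_port}"
    return ["ssh", "-N", "-L", forward, f"{user}@{host}"]


# Hypothetical account, host, and port, for illustration only.
cmd = build_tunnel_command("agent1", "gpu-server.example", 8080, 8080)

# Print a copy-pasteable shell command; pass `cmd` to subprocess.Popen
# instead if the agent should open the tunnel itself.
print(shlex.join(cmd))
```

With the tunnel open, the agent talks to `127.0.0.1:8080` as if the model were running locally, and the model server never has to listen on a public interface. Giving each agent its own account on the server also lets you revoke one agent's access without touching the others.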

Join the wave of AI enthusiasts and elevate your tech skills today! If you found this article valuable, please like, share, and connect for further insights!