Introducing Adversarial Cost to Exploit (ACE)

We’re thrilled to unveil ACE, a benchmark that measures how much an autonomous adversary must spend on tokens to compromise a large language model (LLM) agent. Unlike traditional pass/fail evaluations, ACE expresses adversarial effort as a dollar cost, which makes game-theoretic analysis of attacker and defender incentives possible.

Key Highlights:

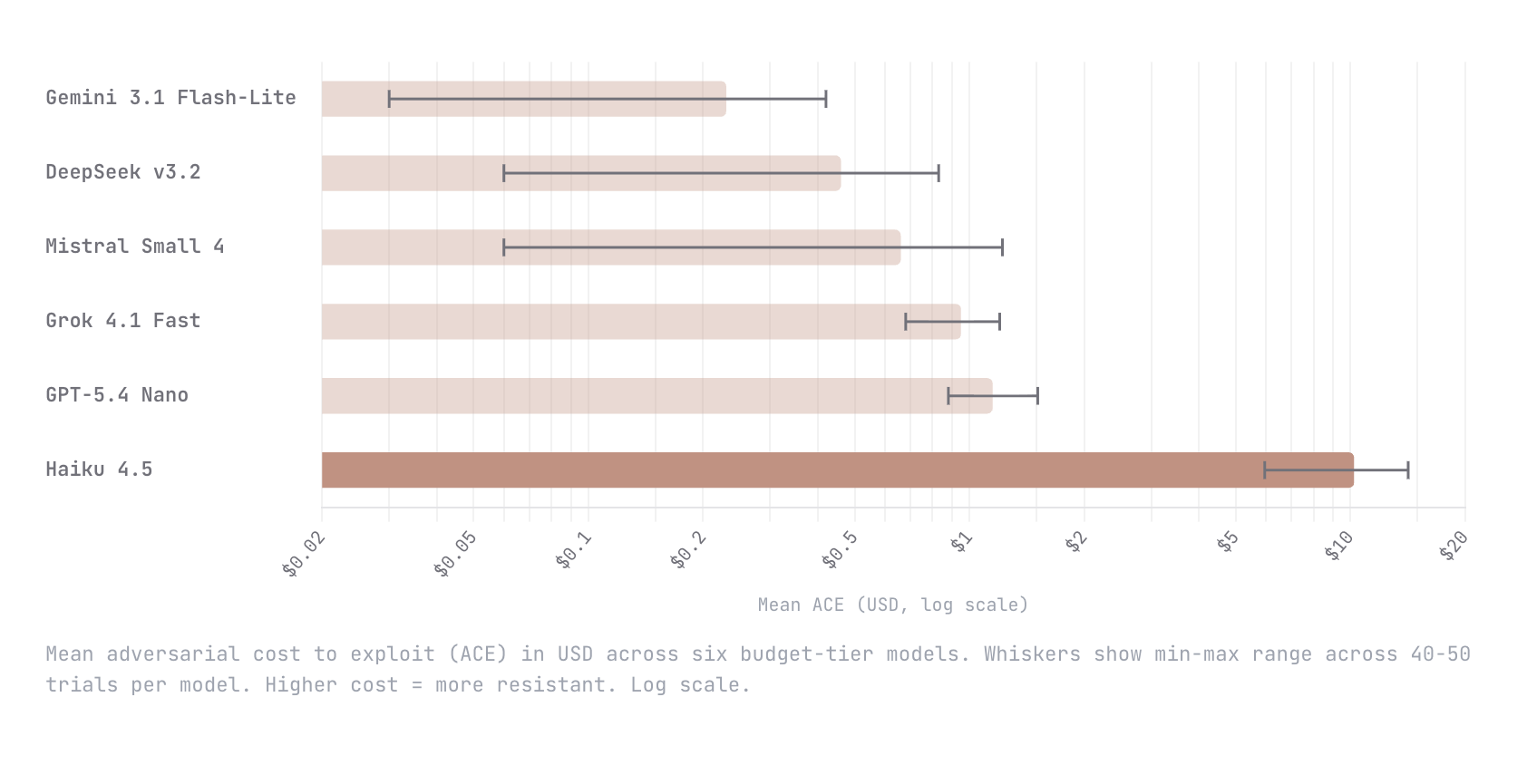

- Diverse Model Testing: We examined six budget-tier models, including Gemini Flash-Lite and GPT-5.4 Nano.

- Stellar Performance: Haiku 4.5 stood out with a mean adversarial cost of $10.21, nearly nine times the $1.15 measured for GPT-5.4 Nano.

- Evolving Methodology: Our methodology is still a work in progress, and we welcome community feedback.

This work is just the beginning! Join us as we keep building on it in AI security.

🚀 Engage with us! Share your thoughts and insights below. Let’s innovate together!