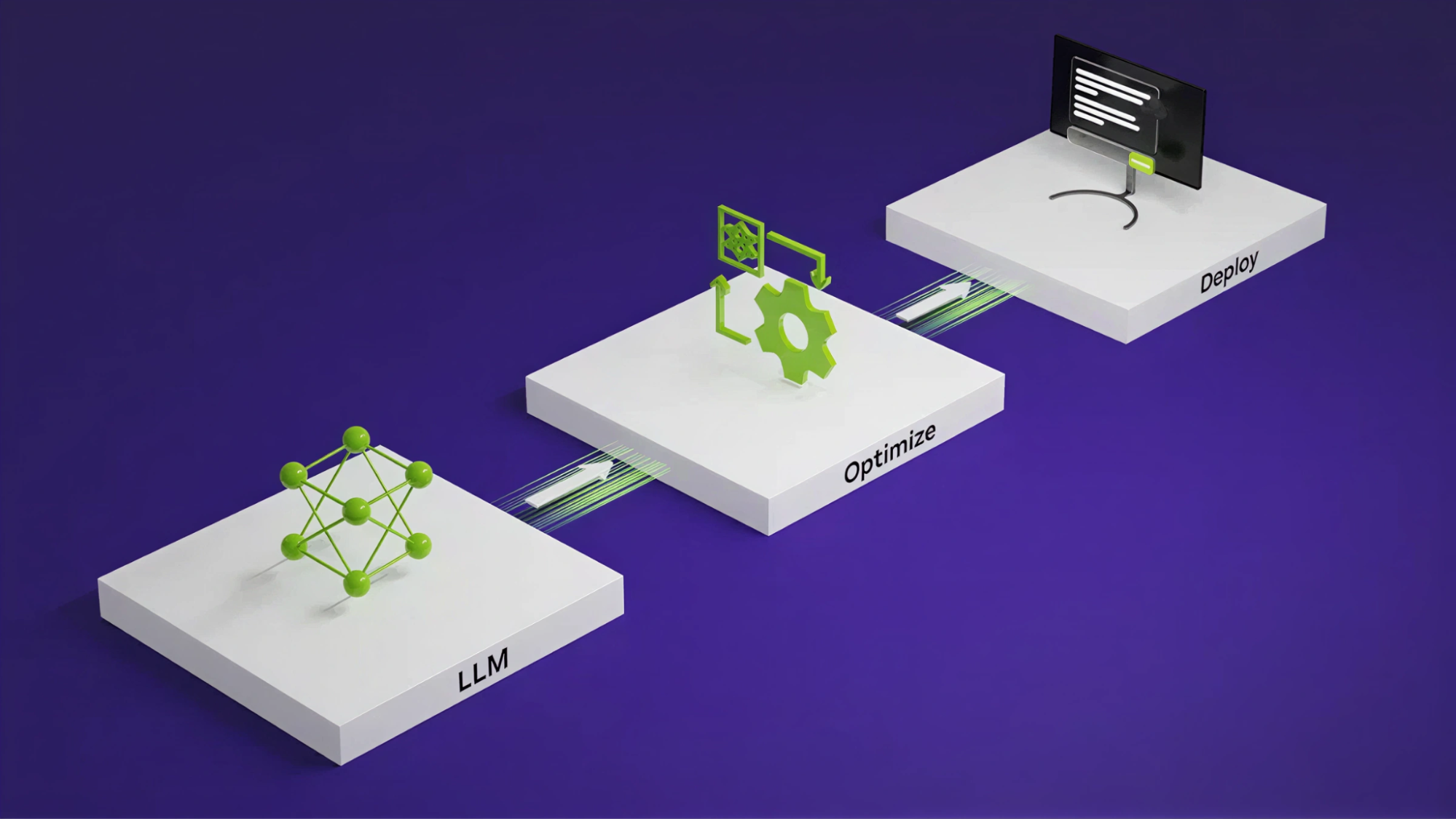

NVIDIA’s TensorRT LLM has introduced AutoDeploy, a beta feature that streamlines the deployment of large language models (LLMs). It automatically compiles standard PyTorch models into inference-optimized graphs, reducing the manual optimization work that deployment usually requires. Developers can focus on authoring the model while the system handles inference-specific concerns such as caching and sharding. This compiler-driven approach suits experimental architectures and less common models, which can be deployed quickly with competitive baseline performance.

AutoDeploy supports over 100 text-to-text models and provides model translation, inference optimization, and a unified training-to-inference workflow. Early adopters such as the NVIDIA Nemotron models show that AutoDeploy can onboard new models quickly while delivering strong performance. Documentation and examples for TensorRT LLM and AutoDeploy are available for readers who want to explore further.
