Exploring AI Alignment: Beyond Human Interests

AI alignment matters not only for human preferences but also for the welfare of non-human beings. Recent discussions suggest that AI safety research should extend to animals and future digital minds. Here’s a high-level overview of the considerations:

-

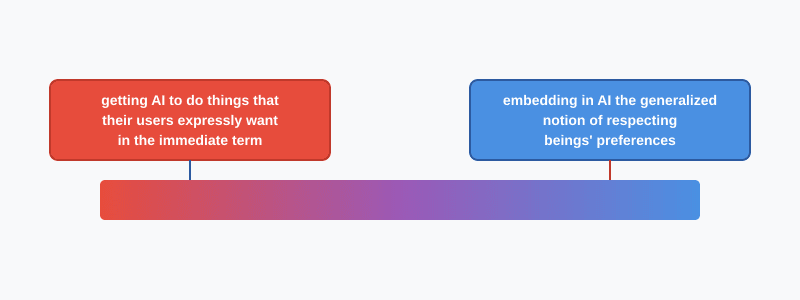

Alignment Spectrum: Techniques range from ensuring AI follows human preferences immediately to embedding respect for broader beings’ rights.

- 12 Categories of Research:

  - Best for Non-Humans: Alignment Theory & Multi-Agent Cooperation.

  - Orthogonal Aspects: Control, Evals, Goal Robustness.

  - Potential Harm: Iterative Alignment (e.g., RLHF).

Understanding these categories helps shape a more ethical AI future. Whether current research matters remains an open question, but engaging with these ideas may offer a path to better non-human welfare in AI development.

🚀 Join the conversation! Share your thoughts on the role of non-human welfare in AI alignment.