Unlocking True Efficiency in AI Inference

Over the years, AI systems have become far more efficient at inference, thanks to better hardware and better optimization techniques. The full picture is more complicated, though:

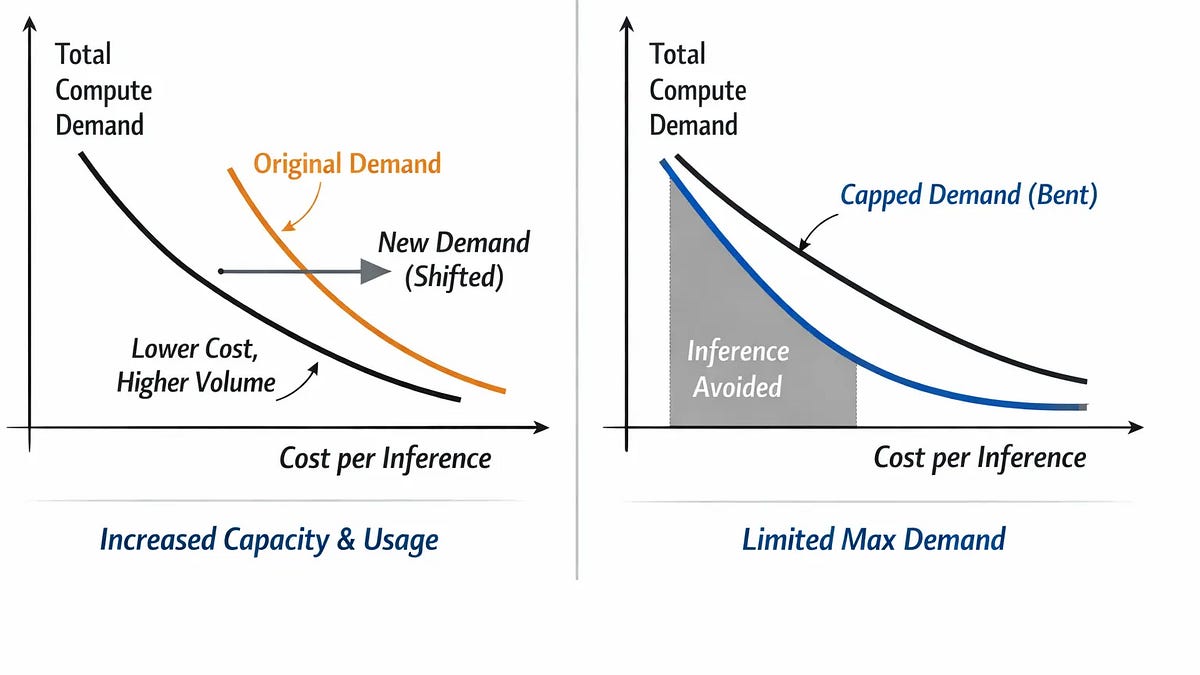

- Rising Demand: Despite efficiency gains, overall compute demand continues to skyrocket as more applications emerge.

- The Hidden Assumption: Many optimization strategies assume every request must trigger inference. That invites a rebound effect: each call gets cheaper, so usage grows.

This essay explores a paradigm shift: viewing inference as conditional rather than automatic. Key insights include:

- Conditional Inference: By treating inference as an action that must be authorized before it runs, we can skip executions that are not needed.

- Restructured Demand: This approach reduces the number of inferences that run at all, unlike traditional methods, which only lower the cost of each one.
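
To make the idea concrete, here is a minimal sketch of an authorization gate in Python. It is an illustration, not the essay's implementation: the `Request` type, the `authorize` policy, and the `CountingModel` stand-in are all hypothetical names, and the policy (skip empty prompts and requests that already have a stored answer) is just one assumed example of what "unnecessary" could mean.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Request:
    prompt: str
    cached_answer: Optional[str] = None  # hypothetical: an answer we already have


def authorize(request: Request) -> bool:
    """Hypothetical policy: allow inference only when it is actually needed."""
    if not request.prompt.strip():
        return False  # empty prompt, nothing to infer
    if request.cached_answer is not None:
        return False  # a stored answer exists, no need to run the model
    return True


def conditional_infer(
    request: Request,
    model: Callable[[str], str],
    policy: Callable[[Request], bool] = authorize,
) -> Optional[str]:
    """Run the model only if the policy authorizes it; otherwise fall back."""
    if policy(request):
        return model(request.prompt)
    return request.cached_answer  # may be None when there is nothing to return


class CountingModel:
    """Stand-in for a real model that counts how often it is invoked."""

    def __init__(self) -> None:
        self.calls = 0

    def __call__(self, prompt: str) -> str:
        self.calls += 1
        return f"answer to: {prompt}"


model = CountingModel()
requests = [
    Request("What is 2+2?"),
    Request("What is 2+2?", cached_answer="4"),
    Request("   "),
]
results = [conditional_infer(r, model) for r in requests]
print(model.calls)  # 1: only the first request reached the model
```

Three requests arrive, but the model runs once. The per-call cost is unchanged; what shrinks is the number of calls, which is the difference between lowering cost and restructuring demand.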

This framing changes what an efficiency strategy targets: not only how cheaply a model runs, but whether it needs to run at all. It’s time to rethink how we approach AI inference to create true, lasting efficiencies.

📈 Let’s discuss! What strategies do you think can help cut down unnecessary inference? Share your thoughts!