Unlocking the Memory Bottleneck in AI: Google’s TurboQuant

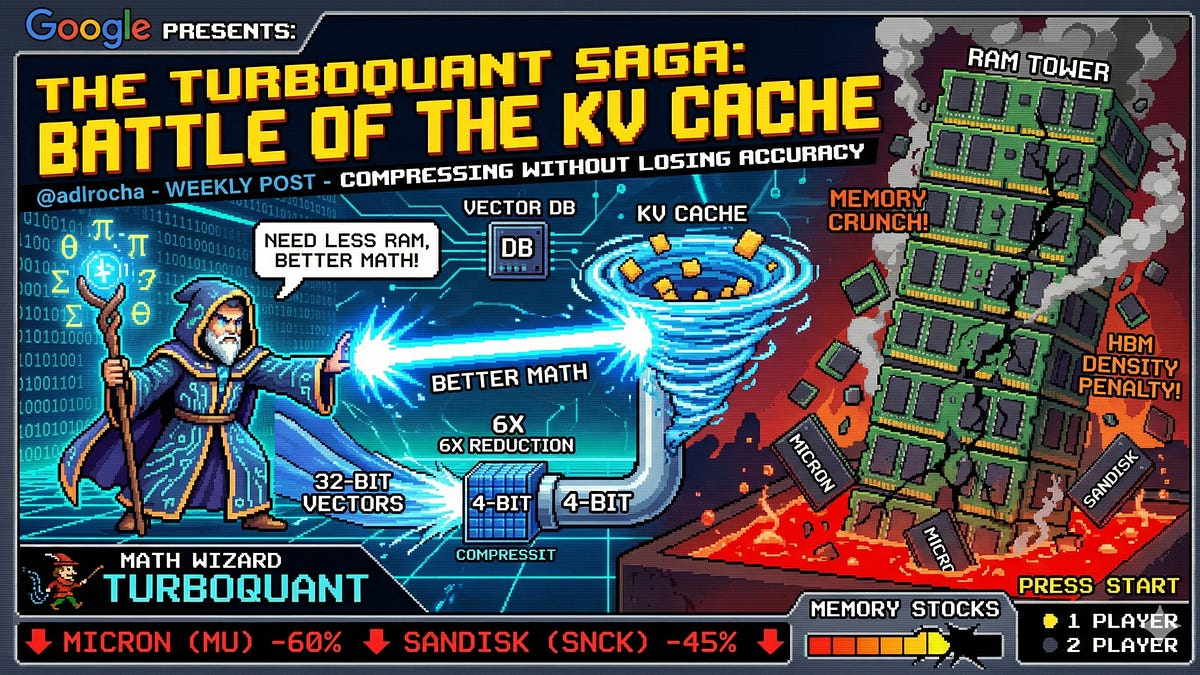

Last week, I explored the growing memory challenges in AI, focusing on hardware constraints such as HBM density and DRAM prices. This week, Google introduced TurboQuant, a compression approach that attacks the problem from the other side: reducing how much memory models need rather than simply adding capacity.

Key Insights:

TurboQuant Overview: A two-stage algorithm designed to compress information efficiently.

- Stage 1: PolarQuant converts the data into polar coordinates (a radius and angles), a form that compresses more efficiently.

- Stage 2: QJL (Quantized Johnson-Lindenstrauss) corrects the error left over from Stage 1 without additional memory overhead.
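
As a rough illustration of the two stages, here is a toy Python sketch. It is not Google's implementation: the function names, the 2D pairing of coordinates, the 3-bit angle grid, and the 4,000 random projections are all assumptions chosen to keep the example small. Stage 1 keeps each radius and snaps each angle to a coarse grid; Stage 2 stores only sign bits of random projections of the leftover error and uses them to correct a dot product.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def polar_quantize(vec, bits):
    """Toy stage 1: pair up coordinates, keep each radius, snap each angle to 2**bits bins."""
    levels = 2 ** bits
    step = 2 * math.pi / levels
    codes = []
    for i in range(0, len(vec), 2):
        x, y = vec[i], vec[i + 1]
        r = math.hypot(x, y)                  # radius kept at full precision (simplification)
        theta = math.atan2(y, x)              # angle in [-pi, pi]
        idx = int((theta + math.pi) / step) % levels
        codes.append((r, idx))
    return codes

def polar_dequantize(codes, bits):
    """Rebuild an approximate vector from (radius, angle bin) pairs using bin centers."""
    step = 2 * math.pi / (2 ** bits)
    out = []
    for r, idx in codes:
        theta = -math.pi + (idx + 0.5) * step
        out.extend([r * math.cos(theta), r * math.sin(theta)])
    return out

def sign_sketch(vec, proj):
    """Toy stage 2: one sign bit per random Gaussian projection, plus the vector norm."""
    signs = [1 if dot(row, vec) >= 0 else -1 for row in proj]
    return signs, math.sqrt(dot(vec, vec))

def estimate_dot(query, signs, norm, proj):
    """Estimate <query, vec> from the sign bits; unbiased for Gaussian projections."""
    total = sum(dot(row, query) * s for row, s in zip(proj, signs))
    return math.sqrt(math.pi / 2) * norm * total / len(proj)

# Hypothetical sizes: an 8-dimensional key, 3 bits per angle, 4000 projections.
# A real system would use far fewer bits; the large count keeps this demo stable.
rng = random.Random(0)
dim, bits, num_proj = 8, 3, 4000
key = [rng.gauss(0, 1) for _ in range(dim)]
query = [rng.gauss(0, 1) for _ in range(dim)]

approx = polar_dequantize(polar_quantize(key, bits), bits)
residual = [k - a for k, a in zip(key, approx)]

proj = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(num_proj)]
signs, norm = sign_sketch(residual, proj)
corrected = dot(query, approx) + estimate_dot(query, signs, norm, proj)

print("exact dot product:    ", dot(query, key))
print("stage 1 only:         ", dot(query, approx))
print("stage 1 + correction: ", corrected)
```

The point of the sketch is the division of labor: the coarse polar code carries most of the signal, and the sign bits only have to describe the small residual. It makes no attempt to reproduce the memory budget of the real algorithm.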

Impact on AI Models:

- A reported 6x reduction in KV cache size without accuracy loss.

- Potential to enhance on-device inference, making powerful AI more accessible.
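
To put the 6x figure in perspective, here is a back-of-the-envelope calculation in Python. The model shape below (32 layers, 8 KV heads, head dimension 128, a 32,768-token context, 16-bit values) is a hypothetical configuration picked for illustration, not a specific model, and the 6x factor is simply the number quoted above.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_value, batch=1):
    """Size of the KV cache: one key and one value vector per token, head, and layer."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value

GIB = 2 ** 30

# Hypothetical model shape, stored in 16-bit precision (2 bytes per value).
baseline = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128,
                          seq_len=32_768, bytes_per_value=2)
compressed = baseline / 6  # applying the 6x reduction quoted above

print(f"baseline KV cache:   {baseline / GIB:.2f} GiB")    # 4.00 GiB
print(f"after 6x reduction:  {compressed / GIB:.2f} GiB")  # 0.67 GiB
```

For this assumed configuration, a single long-context request drops from 4 GiB of cache to well under 1 GiB, which is the kind of difference that matters on a phone or laptop.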

Market Reactions:

- Shares of memory companies such as Micron fell sharply after the announcement, a sign that investors see a potential shift in AI resource economics.

Broader Implications:

- TurboQuant isn’t just for LLMs. It could also reshape other data-heavy fields, such as recommendation systems and genomics.

Stay tuned as I delve into how TurboQuant may reshape the AI landscape! 🚀

💬 What are your thoughts on TurboQuant? Share below or connect to discuss more!