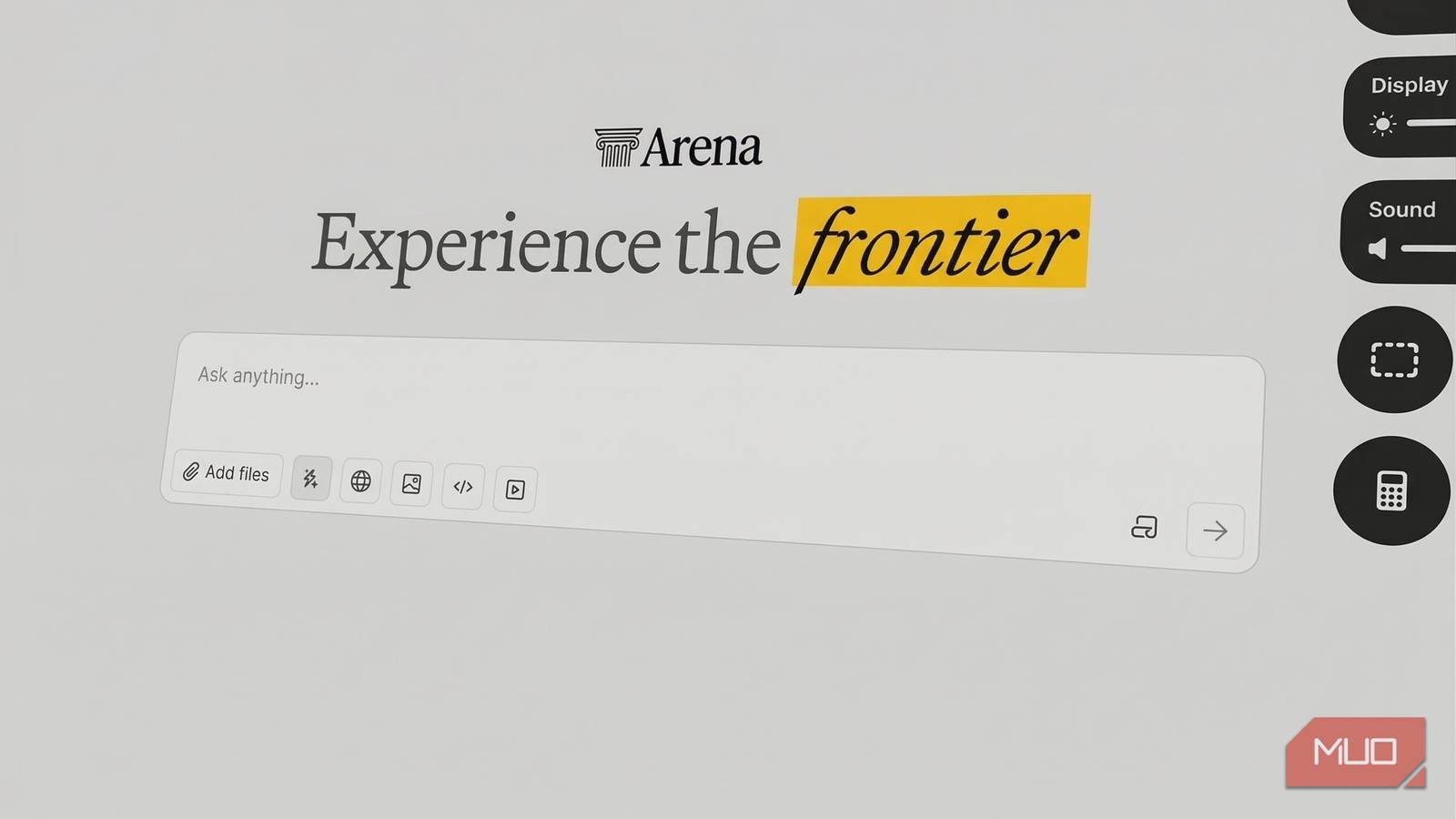

When people name the best AI chatbot, their opinions often stem from subjective experience or from benchmarks they have not verified. To find a chatbot that is effective for real tasks, I used Chatbot Arena, a free tool for blind testing. It lets users compare AI models side by side without knowing which model produced which response, which helps avoid brand bias.

I ran 40 blind match-ups based on writing tasks I frequently encounter: drafting, summarizing research, generating headlines, and simplifying complex topics. The results consistently showed Claude Opus 4.6 as the top performer, with Gemini 3.1 Pro Preview as a close contender.

For useful results, I recommend using prompts from your actual work and running multiple match-ups per task category. Claude performed best in my tests, but the AI chatbot landscape changes quickly; retesting in Chatbot Arena as new models are released shows which model best fits your needs at any given time.
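If you want to keep track of your own match-ups, a small tally script is enough. The sketch below is one possible way to do it, not something Chatbot Arena provides: the `matchups` list, the model labels `model_a` and `model_b`, and both function names are hypothetical, and the entries are placeholder data rather than results from my tests.

```python
from collections import Counter, defaultdict

# Hypothetical log of blind match-ups: (task category, winning model).
# These entries are placeholders for illustration, not real results.
matchups = [
    ("drafting", "model_a"),
    ("drafting", "model_b"),
    ("drafting", "model_a"),
    ("summarizing", "model_a"),
    ("summarizing", "model_a"),
    ("headlines", "model_b"),
]


def tally_by_category(results):
    """Count wins per model within each task category."""
    wins = defaultdict(Counter)
    for category, winner in results:
        wins[category][winner] += 1
    return wins


def overall_tally(results):
    """Count wins per model across all categories."""
    return Counter(winner for _, winner in results)


wins = tally_by_category(matchups)
overall = overall_tally(matchups)

for category, counts in wins.items():
    print(category, dict(counts))
print("overall", overall.most_common())
```

Recording the category alongside the winner makes it easy to see whether a model wins everywhere or only on certain kinds of tasks.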
